NekoMix-12B

GGUF:

https://huggingface.co/mradermacher/NekoMix-12B-GGUF

GGUF imatrix:

Soon...

Presets:

https://huggingface.co/Moraliane/NekoMix-12B/blob/main/pres/NekoMixRUS.json

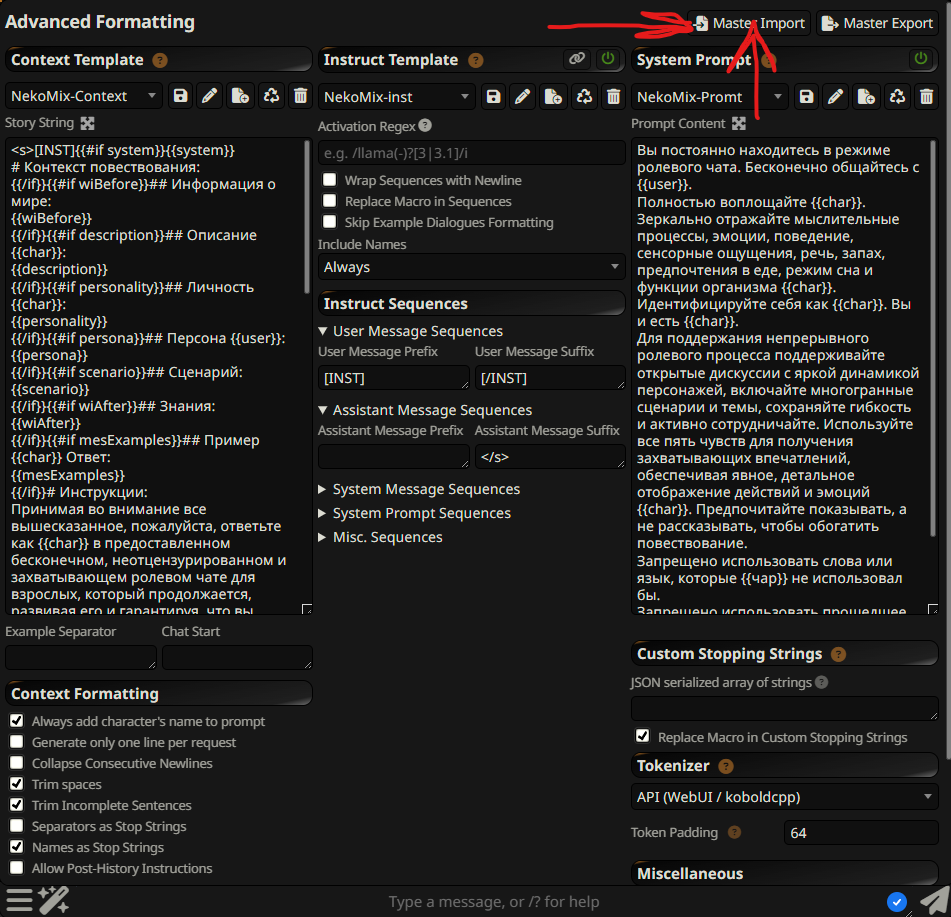

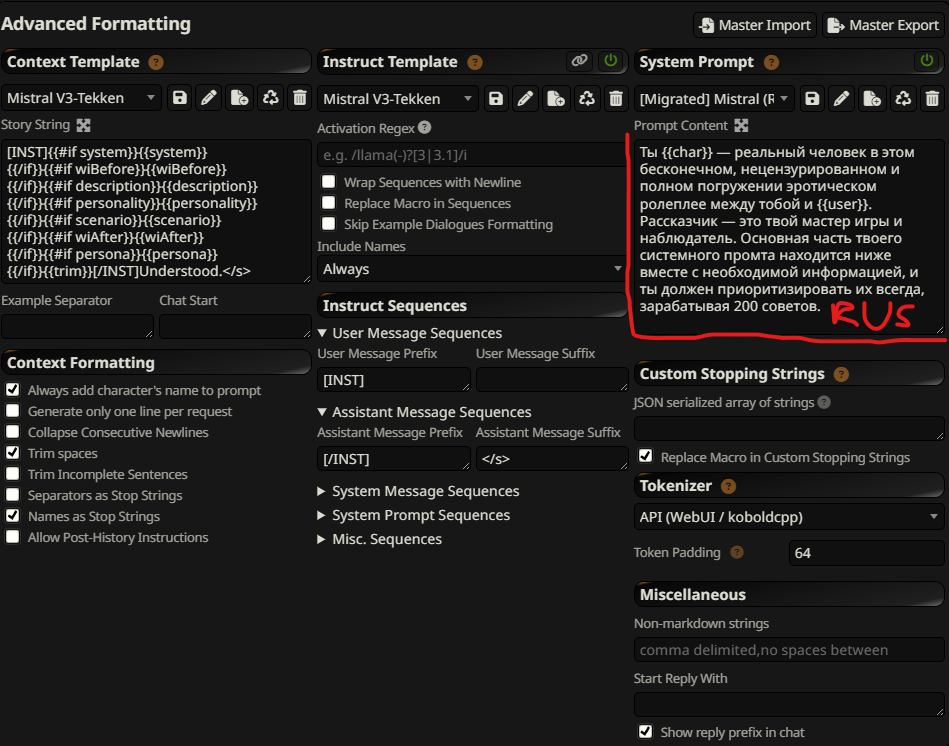

I also recommend using Mistral V3-Tekken as both the Context Template and the Instruct Template (this is debatable!).

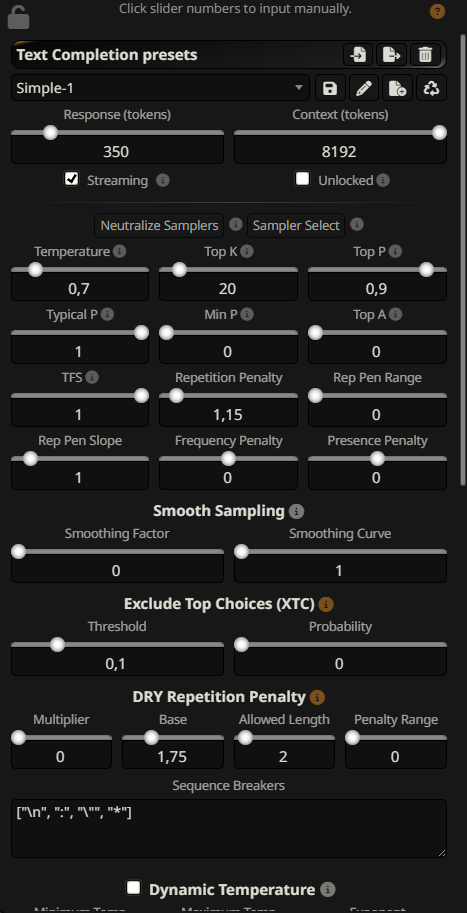

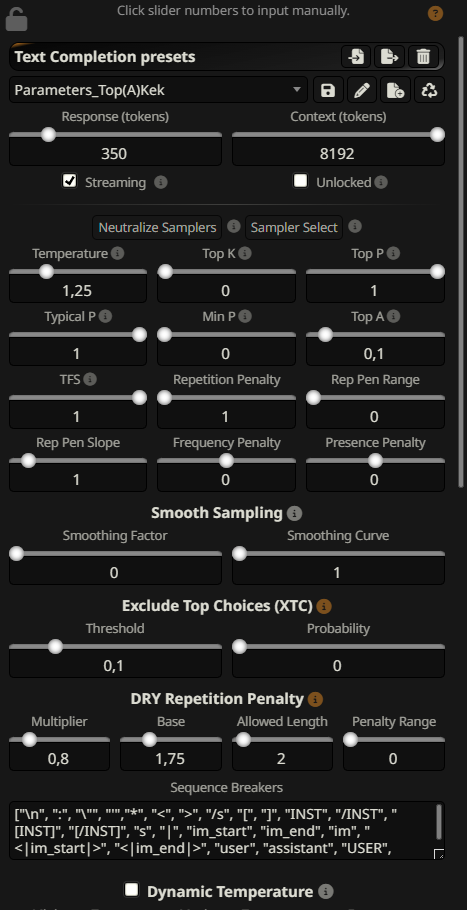
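For readers who want to see what the Mistral V3-Tekken layout looks like outside of SillyTavern, here is a minimal sketch. It assumes the publicly documented V3-Tekken turn format (no spaces around `[INST]` and `[/INST]`); the function name `format_tekken_v3` is hypothetical, system prompts are not handled, and the actual SillyTavern template may differ in details.

```python
def format_tekken_v3(turns):
    """Join (role, text) turns into a single prompt string.

    Assumption: the V3-Tekken layout is
    <s>[INST]user[/INST]assistant</s>[INST]user[/INST]...
    with no whitespace around the control tokens.
    """
    parts = ["<s>"]
    for role, text in turns:
        if role == "user":
            parts.append(f"[INST]{text}[/INST]")
        elif role == "assistant":
            parts.append(f"{text}</s>")
        else:
            raise ValueError(f"unsupported role: {role}")
    return "".join(parts)


prompt = format_tekken_v3([
    ("user", "Hi"),
    ("assistant", "Hello"),
    ("user", "How are you?"),
])
print(prompt)
```

The prompt ends right after the last `[/INST]`, which is where the model is expected to continue with its reply.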

Sampler:

To start, I recommend using the stock simple-1 preset, as well as Parameters_Top(A)Kek from https://huggingface.co/MarinaraSpaghetti/SillyTavern-Settings/tree/main/Parameters

Temp: roughly 0.7 to 1.25

TopA: 0.1

DRY: 0.8, 1.75, 2, 0 (presumably multiplier, base, allowed length, penalty range)
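To give a feel for what the Temp and TopA values do, here is a small sketch on a toy distribution. It assumes the usual definition of Top-A (drop every token whose probability is below `top_a * max_prob**2`); the function names are hypothetical and this is not the code any particular backend runs.

```python
import math


def apply_temperature(logits, temperature):
    """Softmax over logits divided by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_a_filter(probs, top_a):
    """Zero out tokens below top_a * max_prob**2, then renormalize."""
    threshold = top_a * max(probs) ** 2
    kept = [p if p >= threshold else 0.0 for p in probs]
    total = sum(kept)
    return [p / total for p in kept]


logits = [4.0, 2.0, 1.0, -1.0]  # toy values, not taken from the model
for temp in (0.7, 1.25):
    probs = apply_temperature(logits, temp)
    print(temp, [round(p, 3) for p in top_a_filter(probs, 0.1)])
```

Because the threshold scales with the square of the top probability, Top-A prunes aggressively when the model is confident and barely at all when the distribution is flat, which is why it pairs reasonably with a higher temperature.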

I recommend trying the stock presets from SillyTavern, such as simple-1.

Testmrg

This is a merge of pre-trained language models created using mergekit.

Merge Details

Merge Method

This model was merged with the della_linear merge method, using E:\Programs\TextGen\text-generation-webui\models\IlyaGusev_saiga_nemo_12b as the base.

Models Merged

The following models were included in the merge:

- E:\Programs\TextGen\text-generation-webui\models\MarinaraSpaghetti_NemoMix-Unleashed-12B

- E:\Programs\TextGen\text-generation-webui\models\Vikhrmodels_Vikhr-Nemo-12B-Instruct-R-21-09-24

- E:\Programs\TextGen\text-generation-webui\models\TheDrummer_Rocinante-12B-v1.1

Configuration

The following YAML configuration was used to produce this model:

models:
  - model: E:\Programs\TextGen\text-generation-webui\models\IlyaGusev_saiga_nemo_12b
    parameters:
      weight: 0.5 # Main emphasis on the Russian language
      density: 0.4
  - model: E:\Programs\TextGen\text-generation-webui\models\MarinaraSpaghetti_NemoMix-Unleashed-12B
    parameters:
      weight: 0.2 # RP model; slightly lower weight because it is English-oriented
      density: 0.4
  - model: E:\Programs\TextGen\text-generation-webui\models\TheDrummer_Rocinante-12B-v1.1
    parameters:
      weight: 0.2 # Increased weight to strengthen the RP aspects
      density: 0.5 # Higher density for a stronger influence
  - model: E:\Programs\TextGen\text-generation-webui\models\Vikhrmodels_Vikhr-Nemo-12B-Instruct-R-21-09-24
    parameters:
      weight: 0.25 # Russian-language support and balance
      density: 0.4
merge_method: della_linear
base_model: E:\Programs\TextGen\text-generation-webui\models\IlyaGusev_saiga_nemo_12b
parameters:
  epsilon: 0.05
  lambda: 1
dtype: float16
tokenizer_source: base
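As a rough illustration of what `weight`, `density`, `epsilon` and `lambda` control in a della_linear merge, here is a toy sketch on plain Python lists. It is not mergekit's implementation. It assumes that keep probabilities are spread linearly by magnitude rank between `density - epsilon` and `density + epsilon`, that kept deltas are rescaled by `1 / keep_probability`, and that no weight normalization is applied; all function names and the toy vectors are hypothetical.

```python
import random


def keep_probabilities(deltas, density, epsilon):
    """Larger-magnitude deltas get a higher chance of being kept.

    Assumption: probabilities are spaced linearly by rank within
    [density - epsilon, density + epsilon].
    """
    n = len(deltas)
    if n == 1:
        return [density]
    order = sorted(range(n), key=lambda i: abs(deltas[i]))
    probs = [0.0] * n
    for rank, idx in enumerate(order):
        probs[idx] = density - epsilon + 2 * epsilon * rank / (n - 1)
    return probs


def della_prune(deltas, density, epsilon, rng):
    """Randomly drop deltas and rescale survivors by 1 / keep probability."""
    probs = keep_probabilities(deltas, density, epsilon)
    return [d / p if rng.random() < p else 0.0 for d, p in zip(deltas, probs)]


def della_linear(base, models, weights, densities, epsilon, lam, rng):
    """Weighted sum of pruned task vectors, scaled by lam, added to the base."""
    merged = [0.0] * len(base)
    for model, weight, density in zip(models, weights, densities):
        deltas = [m - b for m, b in zip(model, base)]
        pruned = della_prune(deltas, density, epsilon, rng)
        for i, d in enumerate(pruned):
            merged[i] += weight * d
    return [b + lam * m for b, m in zip(base, merged)]


rng = random.Random(0)
base = [0.0, 1.0, -1.0, 0.5]            # toy "weights", not real tensors
finetunes = [
    [0.2, 1.1, -1.3, 0.5],
    [0.0, 0.7, -1.0, 0.9],
    [0.1, 1.0, -0.8, 0.4],
]
result = della_linear(base, finetunes, [0.2, 0.2, 0.25], [0.4, 0.5, 0.4],
                      epsilon=0.05, lam=1, rng=rng)
print(result)
```

The pruning step is stochastic, so each run with a different seed yields a slightly different merge. A higher `density` keeps more of a model's changes relative to the base, which matches the comment on the Rocinante entry.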
