GGUF: Breeze-7B-Instruct-v1_0

Breeze-7B-Instruct-v1_0 converted with llama.cpp into three GGUF models.

| Name | Quant method | Bits | Size | Use case |
|---|---|---|---|---|
| breeze-7b-instruct-v1_0-q4_k_m.gguf | Q4_K_M | 4 | 4.54 GB | medium, balanced quality - recommended |
| breeze-7b-instruct-v1_0-q5_k_m.gguf | Q5_K_M | 5 | 5.32 GB | large, very low quality loss - recommended |
| breeze-7b-instruct-v1_0-q6_k.gguf | Q6_K | 6 | 6.14 GB | very large, extremely low quality loss |
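As a rough aid for choosing between the three files, the sketch below picks the largest quantization whose file size fits a given memory budget. The sizes are copied from the table; the `pick_quant` helper and its `headroom_gb` allowance are hypothetical and not part of llama.cpp or this repository, and actual memory use also depends on context length and runtime.

```python
# File sizes in GB, copied from the table above.
QUANT_FILES = {
    "breeze-7b-instruct-v1_0-q4_k_m.gguf": 4.54,
    "breeze-7b-instruct-v1_0-q5_k_m.gguf": 5.32,
    "breeze-7b-instruct-v1_0-q6_k.gguf": 6.14,
}


def pick_quant(memory_budget_gb, headroom_gb=1.0):
    """Return the largest file that fits the budget, or None if none fits.

    headroom_gb is an assumed allowance for the context cache and runtime
    overhead; it is a guess, so adjust it for your own setup.
    """
    usable = memory_budget_gb - headroom_gb
    fitting = [(size, name) for name, size in QUANT_FILES.items() if size <= usable]
    return max(fitting)[1] if fitting else None


print(pick_quant(8.0))  # largest file that fits in 8 GB minus headroom
print(pick_quant(6.0))
print(pick_quant(4.0))
```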

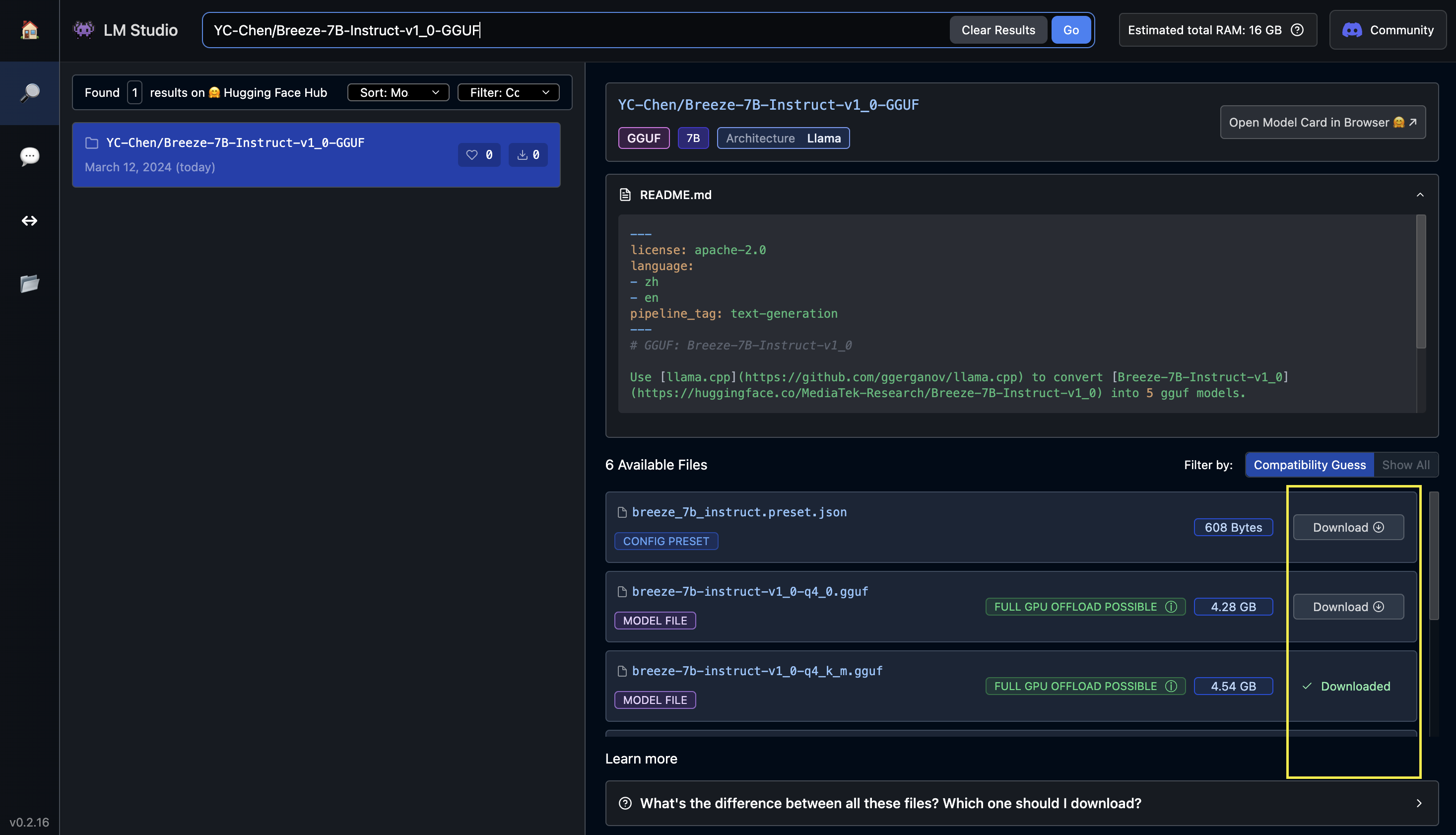

How to use these models locally with a UI

- Download the app from LM Studio
- Search for "YC-Chen/Breeze-7B-Instruct-v1_0-GGUF"
- Download the preset `breeze_7b_instruct.preset.json` and the GGUF file `breeze-7b-instruct-v1_0-q6_k.gguf`
- Choose the right model and preset, then start a conversation
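If you prefer to fetch the same two files from a script instead of the LM Studio search box, something like the following may work. It is a sketch that assumes both files are hosted in the `YC-Chen/Breeze-7B-Instruct-v1_0-GGUF` repository under exactly these names and that the `huggingface_hub` package is installed; the `download_files` helper and the `models` directory are illustrative names only.

```python
REPO_ID = "YC-Chen/Breeze-7B-Instruct-v1_0-GGUF"
# File names as given in the steps above; assumed to exist in the repository.
FILES = [
    "breeze_7b_instruct.preset.json",
    "breeze-7b-instruct-v1_0-q6_k.gguf",
]


def download_files(local_dir="models"):
    """Download the preset and the GGUF file, returning their local paths."""
    # Imported lazily so this module loads even without huggingface_hub.
    from huggingface_hub import hf_hub_download

    return [
        hf_hub_download(repo_id=REPO_ID, filename=name, local_dir=local_dir)
        for name in FILES
    ]


# Example (needs network access; the GGUF file is about 6 GB):
# paths = download_files()
```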

How to use these models locally with Python

- Install ctransformers

Run one of the following commands, according to your system:

# Base ctransformers with no GPU acceleration
pip install ctransformers

# Or with CUDA GPU acceleration
pip install ctransformers[cuda]

# Or with AMD ROCm GPU acceleration (Linux only)
CT_HIPBLAS=1 pip install ctransformers --no-binary ctransformers

# Or with Metal GPU acceleration (macOS only)
CT_METAL=1 pip install ctransformers --no-binary ctransformers
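After running one of the commands above, a quick check like this can confirm that the package is visible to the Python interpreter you intend to use. It relies only on the standard library; the `installed_version` helper is an illustrative name, and the check says nothing about whether GPU acceleration was compiled in.

```python
from importlib import metadata


def installed_version(package="ctransformers"):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


version = installed_version()
print(f"ctransformers {version}" if version else "ctransformers is not installed")
```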

- Simple code

from ctransformers import AutoModelForCausalLM
from transformers import AutoTokenizer

# Set gpu_layers to the number of layers to offload to GPU.
# Set to 0 if no GPU acceleration is available on your system.
llm = AutoModelForCausalLM.from_pretrained(
    "YC-Chen/Breeze-7B-Instruct-v1_0-GGUF",
    model_file="breeze-7b-instruct-v1_0-q6_k.gguf",
    model_type="mistral",
    context_length=8000,
    gpu_layers=50,
)

# The tokenizer of the original model supplies the chat template.
tokenizer = AutoTokenizer.from_pretrained("MediaTek-Research/Breeze-7B-Instruct-v1_0")

# top_k=1 keeps only the most likely token at each step, so decoding is effectively greedy.
gen_kwargs = dict(
    max_new_tokens=1024,
    repetition_penalty=1.1,
    stop=["[INST]"],
    temperature=0.0,
    top_p=0.0,
    top_k=1,
)

chat = [
    {"role": "system", "content": "You are a helpful AI assistant built by MediaTek Research. The user you are helping speaks Traditional Chinese and comes from Taiwan."},
    # User prompt: "Please introduce five Taiwanese snacks"
    {"role": "user", "content": "請介紹五樣台灣小吃"},
]

# Stream the reply piece by piece as it is generated.
for text in llm(tokenizer.apply_chat_template(chat, tokenize=False), stream=True, **gen_kwargs):
    print(text, end="", flush=True)

# 以下推薦五樣台灣的小吃:

#

# 1. 蚵仔煎 (Oyster omelette) - 蚵仔煎是一種以蛋、麵皮和蚵仔為主要食材的傳統美食。它通常在油鍋中煎至金黃色,外酥內嫩,並帶有一股獨特的香氣。蚵仔煎是一道非常受歡迎的小吃,經常可以在夜市或小吃店找到。

# 2. 牛肉麵 (Beef noodle soup) - 牛肉麵是台灣的經典美食之一,它以軟嫩的牛肉和濃郁的湯頭聞名。不同地區的牛肉麵可能有不同的口味和配料,但通常都會包含麵條、牛肉、蔬菜和調味料。牛肉麵在全台灣都有不少知名店家,例如林東芳牛肉麵、牛大哥牛肉麵等。

# 3. 鹹酥雞 (Fried chicken) - 鹹酥雞是一種以雞肉為主要食材的快餐。它通常會經過油炸處理,然後搭配多種蔬菜和調味料。鹹酥雞的口味因地區而異,但通常都會有辣、甜、鹹等不同風味。鹹酥雞經常可以在夜市或路邊攤找到,例如鼎王鹹酥雞、鹹酥G去等知名店家。

# 4. 珍珠奶茶 (Bubble tea) - 珍珠奶茶是一種以紅茶為基底的飲品,加入珍珠(Q彈的小湯圓)和鮮奶。它起源於台灣,並迅速成為全球流行的飲料。珍珠奶茶在全台灣都有不少知名品牌,例如茶湯會、五桐號等。

# 5. 臭豆腐 (Stinky tofu) - 臭豆腐是一種以發酵豆腐為原料製作的傳統小吃。它具有強烈的氣味,但味道獨特且深受台灣人喜愛。臭豆腐通常會搭配多種調味料和配料,例如辣椒醬、蒜泥、酸菜等。臭豆腐在全台灣都有不少知名店家,例如阿宗麵線、大勇街臭豆腐等。
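To continue the conversation beyond a single question, the reply has to be appended to `chat` before the next prompt is rendered. The sketch below shows one way to do that; `chat_turn` is a hypothetical helper, not part of ctransformers or transformers, and it assumes `llm` and `tokenizer` behave as in the simple code above (the model yields text pieces when called with `stream=True`, and the chat template accepts alternating user and assistant messages).

```python
def chat_turn(llm, tokenizer, chat, user_message, **gen_kwargs):
    """Append a user message, generate a reply, and record it in the history."""
    chat.append({"role": "user", "content": user_message})
    # Render the whole history into a single prompt string.
    prompt = tokenizer.apply_chat_template(chat, tokenize=False)
    # Collect the streamed pieces into one reply string.
    reply = "".join(llm(prompt, stream=True, **gen_kwargs)).strip()
    chat.append({"role": "assistant", "content": reply})
    return reply


# Example, reusing llm, tokenizer, and gen_kwargs from the simple code,
# starting from a chat list that contains only the system message:
# reply = chat_turn(llm, tokenizer, chat, "請介紹五樣台灣小吃", **gen_kwargs)
# more = chat_turn(llm, tokenizer, chat, "請再推薦三樣", **gen_kwargs)  # "Please recommend three more"
```

Because the helper mutates `chat` in place, each later call sees the full history, at the cost of a longer prompt on every turn.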
