Phi-2 function calling

This model is a merge of https://huggingface.co/cognitivecomputations/dolphin-2_6-phi-2 and an SFT function-calling LoRA.

The SFT dataset is https://huggingface.co/datasets/Yhyu13/glaive-function-calling-v2-llama-factory-convert, which I converted for llama_factory from the original dataset https://huggingface.co/datasets/glaiveai/glaive-function-calling-v2

This model is accompanied by a PR on textgen-webui that enables its function calling ability, like GPTs: Add function calling ability to openai extension

Caution

Do not use this model in production; phi-2 has limited prompt-following ability when it comes to function calling.

Please check out my best function calling model here: https://huggingface.co/Yhyu13/dolphin-2.6-mistral-7b-dpo-laser-function-calling

Detail

The function call is wrapped in a simple XML tag for easier identification.

<functioncall> {\"name\": \"calculate_loan_payment\", \"arguments\": '{\"principal\": 50000, \"interest_rate\": 5, \"loan_term\": 10}'} </functioncall>

It can be extracted like this:

import re
import json

input_str = "<functioncall> {\"name\": \"calculate_loan_payment\", \"arguments\": '{\"principal\": 50000, \"interest_rate\": 5, \"loan_term\": 10}'} </functioncall>"

# Define the pattern to match the JSON string within the functioncall tags
pattern = r'<functioncall>(.*?)</functioncall>'

# Use re.search to find the matched pattern
match = re.search(pattern, input_str, re.DOTALL)

if match:
    json_str = match.group(1)
    # Remove the single quotes surrounding the inner JSON string
    json_str = json_str.replace("'", "")
    # Load the JSON string into a Python dictionary
    json_data = json.loads(json_str)
    print(json_data)
else:
    print("No match found.")

Or, if you want to faithfully keep the single quotes that wrap the arguments value (OpenAI does it this way, and it makes json.loads fail on the original json_str), use ast.literal_eval to the rescue.

if match:
    import ast

    json_str = match.group(1)
    json_str = json_str.strip()
    # See https://www.datasciencebyexample.com/2023/03/16/what-to-do-when-single-quotes-in-json-string/
    json_dict = ast.literal_eval(json_str)
    print(json_dict['name'], json_dict['arguments'])
else:
    print("No match found.")
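The two approaches above can be combined into a single helper. The following is only a minimal sketch, not part of the model or of textgen-webui: the name `parse_function_call` is hypothetical, and it assumes the model emits exactly one `<functioncall>` block whose payload is either plain JSON or the single-quoted form shown earlier.

```python
import ast
import json
import re

# Matches the payload between the tags used by this model.
FUNCTIONCALL_RE = re.compile(r"<functioncall>(.*?)</functioncall>", re.DOTALL)


def parse_function_call(text):
    """Return (name, arguments_dict) for the first function call in text, or None."""
    match = FUNCTIONCALL_RE.search(text)
    if match is None:
        return None
    payload = match.group(1).strip()
    try:
        # Plain JSON payload (arguments given as a nested object).
        call = json.loads(payload)
    except json.JSONDecodeError:
        # Single-quoted arguments string: parse as a Python literal instead.
        call = ast.literal_eval(payload)
    arguments = call.get("arguments", {})
    if isinstance(arguments, str):
        # The arguments value is itself a JSON document; decode it.
        arguments = json.loads(arguments)
    return call["name"], arguments
```

Unlike the `replace("'", "")` approach, this leaves apostrophes inside argument values untouched. One caveat: `ast.literal_eval` only understands Python literals, so the fallback raises an error if the outer payload contains JSON-only tokens such as `true`, `false`, or `null` outside the quoted arguments string.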

Hopefully, this model can be a drop-in replacement for apps (e.g. memgpt) that require function calling ability from LLMs.

Another note on interpreting function call result:

The function response is wrapped in <functionresponse> tags so that it can be identified as a function call result (which could be evaluated behind the scenes; in principle, its result should be interpreted as part of the user input). The assistant then processes it to form a conversational response.

<functionresponse> json_str </functionresponse>
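To illustrate the round trip, here is a hedged sketch of how an application might execute a parsed call and wrap the result in the tag described above. The `TOOLS` registry, `run_function_call`, and the local `calculate_median` implementation are all hypothetical names introduced for this example; how the result is fed back to the model depends on your frontend.

```python
import json
import statistics


def calculate_median(numbers):
    # Hypothetical local implementation of a median tool.
    return {"median": statistics.median(numbers)}


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"calculate_median": calculate_median}


def run_function_call(name, arguments):
    """Execute a parsed call and wrap its result for the next model turn."""
    if name not in TOOLS:
        # Report unknown tools back to the model instead of raising.
        result = {"error": f"unknown function: {name}"}
    else:
        result = TOOLS[name](**arguments)
    return f"<functionresponse> {json.dumps(result)} </functionresponse>"


print(run_function_call("calculate_median", {"numbers": [1, 3, 5, 7, 9]}))
# prints: <functionresponse> {"median": 5} </functionresponse>
```

The returned string would then be appended to the conversation as if it were user input, so that the assistant can turn it into a conversational reply.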

Result

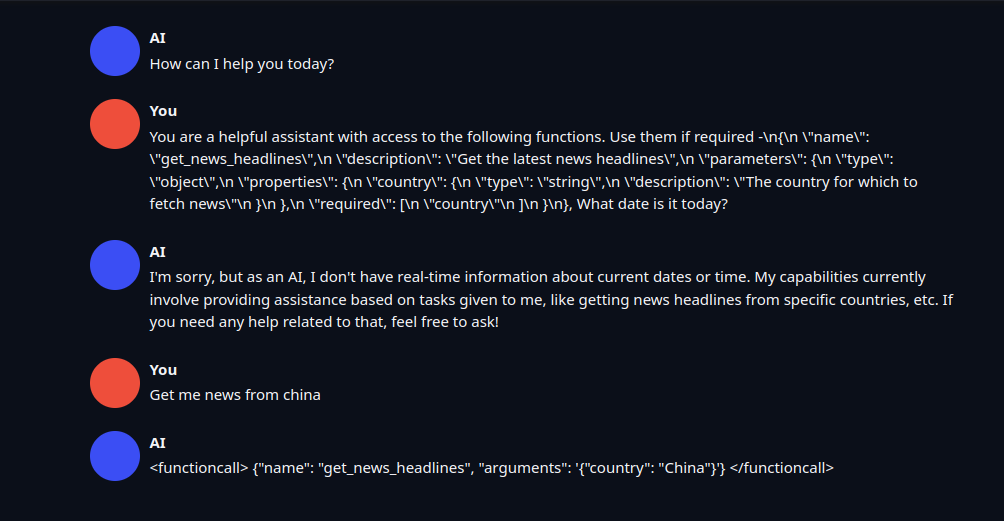

Prompt:

SYSTEM: You are a helpful assistant with access to the following functions. Use them if required -\n{\n \"name\": \"get_news_headlines\",\n \"description\": \"Get the latest news headlines\",\n \"parameters\": {\n \"type\": \"object\",\n \"properties\": {\n \"country\": {\n \"type\": \"string\",\n \"description\": \"The country for which to fetch news\"\n }\n },\n \"required\": [\n \"country\"\n ]\n }\n}\n\nWhat date is it today?

Prompt:

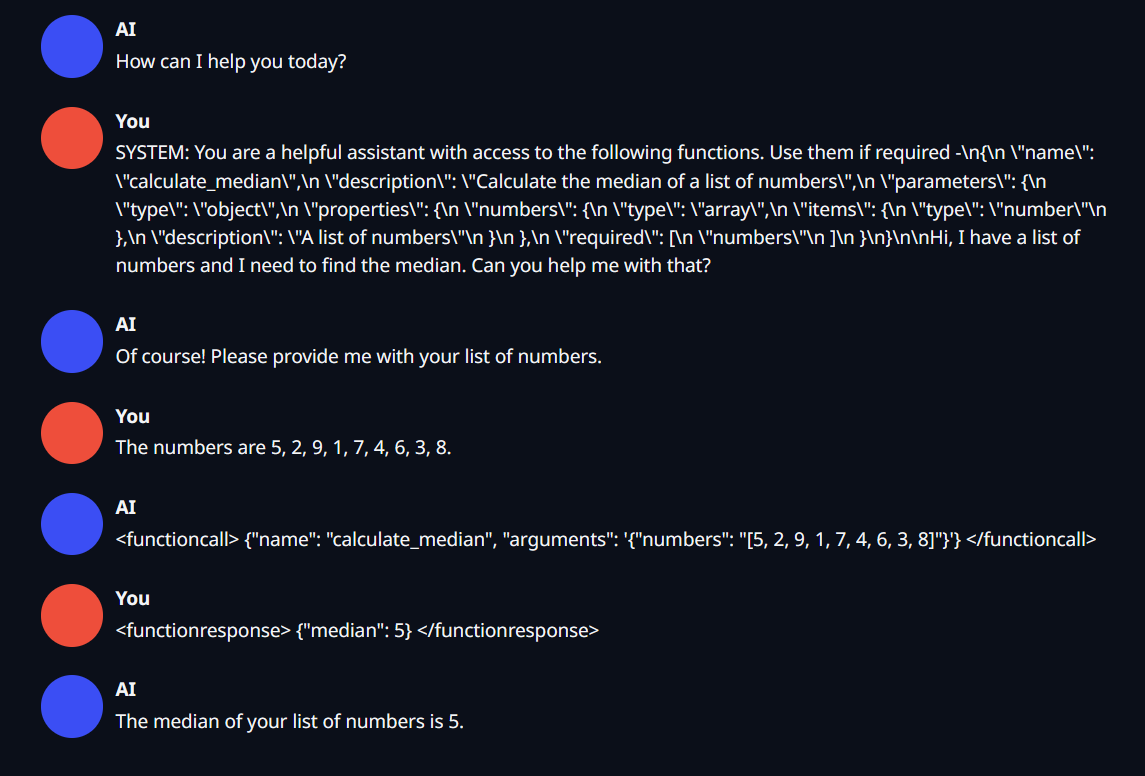

SYSTEM: You are a helpful assistant with access to the following functions. Use them if required -\n{\n \"name\": \"calculate_median\",\n \"description\": \"Calculate the median of a list of numbers\",\n \"parameters\": {\n \"type\": \"object\",\n \"properties\": {\n \"numbers\": {\n \"type\": \"array\",\n \"items\": {\n \"type\": \"number\"\n },\n \"description\": \"A list of numbers\"\n }\n },\n \"required\": [\n \"numbers\"\n ]\n }\n}\n\nHi, I have a list of numbers and I need to find the median. Can you help me with that?

Function response:

<functionresponse> {"median": 5} </functionresponse>
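The prompts in this section follow one pattern: a fixed system sentence, the function schema as JSON, a blank line, and then the user message. A minimal sketch of assembling such a prompt is given below. `build_prompt` and `NEWS_SCHEMA` are hypothetical names, and the `indent=4` setting is an assumption, since the exact whitespace used in the training data is not recoverable from the prompts shown here.

```python
import json

SYSTEM_PREFIX = (
    "SYSTEM: You are a helpful assistant with access to the following functions. "
    "Use them if required -\n"
)

# Schema copied from the first example prompt in this section.
NEWS_SCHEMA = {
    "name": "get_news_headlines",
    "description": "Get the latest news headlines",
    "parameters": {
        "type": "object",
        "properties": {
            "country": {
                "type": "string",
                "description": "The country for which to fetch news",
            }
        },
        "required": ["country"],
    },
}


def build_prompt(function_schema, user_message):
    """Assemble system text, the JSON schema, a blank line, and the user message."""
    # indent=4 is an assumption about the expected whitespace.
    schema_text = json.dumps(function_schema, indent=4)
    return f"{SYSTEM_PREFIX}{schema_text}\n\n{user_message}"


prompt = build_prompt(NEWS_SCHEMA, "What date is it today?")
print(prompt)
```

Keeping the schema as a Python dictionary and serializing it with `json.dumps` avoids the hand-escaped quotes seen in the raw prompt strings.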
