| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

THUDM/chatglm2-6b | THUDM | "2023-10-09T08:19:27Z" | 437,064 | 2,010 | transformers | [

"transformers",

"pytorch",

"chatglm",

"glm",

"thudm",

"custom_code",

"zh",

"en",

"arxiv:2103.10360",

"arxiv:2210.02414",

"arxiv:1911.02150",

"endpoints_compatible",

"region:us"

] | null | "2023-06-24T16:26:27Z" | ---

language:

- zh

- en

tags:

- glm

- chatglm

- thudm

---

# ChatGLM2-6B

<p align="center">

💻 <a href="https://github.com/THUDM/ChatGLM2-6B" target="_blank">Github Repo</a> • 🐦 <a href="https://twitter.com/thukeg" target="_blank">Twitter</a> • 📃 <a href="https://arxiv.org/abs/2103.10360" target="_blank">[GLM@ACL 22]</a> <a href="https://github.com/THUDM/GLM" target="_blank">[GitHub]</a> • 📃 <a href="https://arxiv.org/abs/2210.02414" target="_blank">[GLM-130B@ICLR 23]</a> <a href="https://github.com/THUDM/GLM-130B" target="_blank">[GitHub]</a> <br>

</p>

<p align="center">

👋 Join our <a href="https://join.slack.com/t/chatglm/shared_invite/zt-1y7pqoloy-9b1g6T6JjA8J0KxvUjbwJw" target="_blank">Slack</a> and <a href="https://github.com/THUDM/ChatGLM-6B/blob/main/resources/WECHAT.md" target="_blank">WeChat</a>

</p>

<p align="center">

📍Experience the larger-scale ChatGLM model at <a href="https://www.chatglm.cn">chatglm.cn</a>

</p>

## Introduction

ChatGLM**2**-6B is the second-generation version of the open-source bilingual (Chinese-English) chat model [ChatGLM-6B](https://github.com/THUDM/ChatGLM-6B). It retains the smooth conversation flow and low deployment threshold of the first-generation model, while introducing the following new features:

1. **Stronger Performance**: Based on the development experience of the first-generation ChatGLM model, we have fully upgraded the base model of ChatGLM2-6B. ChatGLM2-6B uses the hybrid objective function of [GLM](https://github.com/THUDM/GLM), and has undergone pre-training with 1.4T bilingual tokens and human preference alignment training. The [evaluation results](README.md#evaluation-results) show that, compared to the first-generation model, ChatGLM2-6B has achieved substantial improvements in performance on datasets like MMLU (+23%), CEval (+33%), GSM8K (+571%), BBH (+60%), showing strong competitiveness among models of the same size.

2. **Longer Context**: Based on the [FlashAttention](https://github.com/HazyResearch/flash-attention) technique, we have extended the context length of the base model from 2K in ChatGLM-6B to 32K, and trained with a context length of 8K during dialogue alignment, allowing for more rounds of dialogue. However, the current version of ChatGLM2-6B has limited understanding of single-round ultra-long documents, which we will focus on optimizing in future iterations.

3. **More Efficient Inference**: Based on the [Multi-Query Attention](http://arxiv.org/abs/1911.02150) technique, ChatGLM2-6B has faster inference and lower GPU memory usage: under the official implementation, inference speed has increased by 42% compared to the first generation; under INT4 quantization, the dialogue length supported by 6 GB of GPU memory has increased from 1K to 8K.

4. **More Open License**: ChatGLM2-6B weights are **completely open** for academic research, and **free commercial use** is also allowed after completing the [questionnaire](https://open.bigmodel.cn/mla/form).
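To make the memory claim in item 3 concrete, the following sketch compares the key/value cache size of standard multi-head attention with a variant in which all query heads share a small number of key/value heads. The layer count, head counts, and head dimension are illustrative assumptions, not figures taken from this card, and the result says nothing about weight memory or quantization.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # The factor 2 covers the separate key and value tensors.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical model shape (assumed for illustration only).
NUM_LAYERS = 28
NUM_QUERY_HEADS = 32
NUM_SHARED_KV_HEADS = 2
HEAD_DIM = 128
SEQ_LEN = 8192  # the 8K dialogue length mentioned above

# Multi-head attention keeps one key/value head per query head.
mha_bytes = kv_cache_bytes(NUM_LAYERS, NUM_QUERY_HEADS, HEAD_DIM, SEQ_LEN)
# The shared-head variant keeps only a few key/value heads.
mqa_bytes = kv_cache_bytes(NUM_LAYERS, NUM_SHARED_KV_HEADS, HEAD_DIM, SEQ_LEN)

print(f"multi-head cache:  {mha_bytes / 2**20:.0f} MiB")
print(f"shared-head cache: {mqa_bytes / 2**20:.0f} MiB")
print(f"reduction factor:  {mha_bytes // mqa_bytes}x")
```

Under these assumed shapes the cache shrinks by the ratio of query heads to shared key/value heads, which is why longer dialogues fit in the same GPU memory.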

## Software Dependencies

```shell

pip install protobuf transformers==4.30.2 cpm_kernels "torch>=2.0" gradio mdtex2html sentencepiece accelerate

```

## Code Usage

You can use the following code to call the ChatGLM2-6B model and generate a dialogue:

```ipython

>>> from transformers import AutoTokenizer, AutoModel

>>> tokenizer = AutoTokenizer.from_pretrained("THUDM/chatglm2-6b", trust_remote_code=True)

>>> model = AutoModel.from_pretrained("THUDM/chatglm2-6b", trust_remote_code=True).half().cuda()

>>> model = model.eval()

>>> response, history = model.chat(tokenizer, "你好", history=[])

>>> print(response)

你好👋!我是人工智能助手 ChatGLM-6B,很高兴见到你,欢迎问我任何问题。

>>> response, history = model.chat(tokenizer, "晚上睡不着应该怎么办", history=history)

>>> print(response)

晚上睡不着可能会让你感到焦虑或不舒服,但以下是一些可以帮助你入睡的方法:

1. 制定规律的睡眠时间表:保持规律的睡眠时间表可以帮助你建立健康的睡眠习惯,使你更容易入睡。尽量在每天的相同时间上床,并在同一时间起床。

2. 创造一个舒适的睡眠环境:确保睡眠环境舒适,安静,黑暗且温度适宜。可以使用舒适的床上用品,并保持房间通风。

3. 放松身心:在睡前做些放松的活动,例如泡个热水澡,听些轻柔的音乐,阅读一些有趣的书籍等,有助于缓解紧张和焦虑,使你更容易入睡。

4. 避免饮用含有咖啡因的饮料:咖啡因是一种刺激性物质,会影响你的睡眠质量。尽量避免在睡前饮用含有咖啡因的饮料,例如咖啡,茶和可乐。

5. 避免在床上做与睡眠无关的事情:在床上做些与睡眠无关的事情,例如看电影,玩游戏或工作等,可能会干扰你的睡眠。

6. 尝试呼吸技巧:深呼吸是一种放松技巧,可以帮助你缓解紧张和焦虑,使你更容易入睡。试着慢慢吸气,保持几秒钟,然后缓慢呼气。

如果这些方法无法帮助你入睡,你可以考虑咨询医生或睡眠专家,寻求进一步的建议。

```

For more instructions, including how to run the CLI and web demos and how to use model quantization to save GPU memory, please refer to our [GitHub Repo](https://github.com/THUDM/ChatGLM2-6B).
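The usage example above threads the `history` value returned by one `model.chat` call into the next. A minimal sketch of that pattern as a helper follows; `run_dialogue` and the `EchoModel` stand-in are hypothetical names introduced here so the loop can run without a GPU, and the `(query, response)` tuple layout of `history` is an assumption.

```python
def run_dialogue(model, tokenizer, prompts):
    """Send prompts one by one, passing the returned history to the next turn."""
    history = []
    responses = []
    for prompt in prompts:
        response, history = model.chat(tokenizer, prompt, history=history)
        responses.append(response)
    return responses, history


class EchoModel:
    """Hypothetical stand-in that mimics the (response, history) return shape."""

    def chat(self, tokenizer, query, history=None):
        history = list(history or [])
        response = f"echo: {query}"
        history.append((query, response))
        return response, history


responses, history = run_dialogue(EchoModel(), tokenizer=None, prompts=["hello", "how are you?"])
print(responses)
print(len(history))
```

With the real model, the same helper would be called with the `model` and `tokenizer` objects loaded above in place of the stand-in.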

## Change Log

* v1.0

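## License

The code in this repository is open-sourced under the [Apache-2.0](LICENSE) license, while use of the ChatGLM2-6B model weights must comply with the [Model License](MODEL_LICENSE).

## Citation

If you find our work helpful, please consider citing the following papers. The ChatGLM2-6B paper will be published soon, so stay tuned.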

```

@article{zeng2022glm,

title={Glm-130b: An open bilingual pre-trained model},

author={Zeng, Aohan and Liu, Xiao and Du, Zhengxiao and Wang, Zihan and Lai, Hanyu and Ding, Ming and Yang, Zhuoyi and Xu, Yifan and Zheng, Wendi and Xia, Xiao and others},

journal={arXiv preprint arXiv:2210.02414},

year={2022}

}

```

```

@inproceedings{du2022glm,

title={GLM: General Language Model Pretraining with Autoregressive Blank Infilling},

author={Du, Zhengxiao and Qian, Yujie and Liu, Xiao and Ding, Ming and Qiu, Jiezhong and Yang, Zhilin and Tang, Jie},

booktitle={Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)},

pages={320--335},

year={2022}

}

``` |

mistralai/Mistral-7B-Instruct-v0.3 | mistralai | "2024-06-24T08:30:57Z" | 434,372 | 751 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2024-05-22T09:57:04Z" | ---

license: apache-2.0

---

# Model Card for Mistral-7B-Instruct-v0.3

> [!IMPORTANT]

> ❗

> We recommend using `mistral_common` for tokenization as the transformers tokenizer has not been tested by the Mistral team and might give incorrect results.

---

The Mistral-7B-Instruct-v0.3 Large Language Model (LLM) is an instruct fine-tuned version of the Mistral-7B-v0.3.

Mistral-7B-v0.3 has the following changes compared to [Mistral-7B-v0.2](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.2):

- Extended vocabulary to 32768

- Supports v3 Tokenizer

- Supports function calling

## Installation

It is recommended to use `mistralai/Mistral-7B-Instruct-v0.3` with [mistral-inference](https://github.com/mistralai/mistral-inference). For HF transformers code snippets, please keep scrolling.

```

pip install mistral_inference

```

## Download

```py

from huggingface_hub import snapshot_download

from pathlib import Path

mistral_models_path = Path.home().joinpath('mistral_models', '7B-Instruct-v0.3')

mistral_models_path.mkdir(parents=True, exist_ok=True)

snapshot_download(repo_id="mistralai/Mistral-7B-Instruct-v0.3", allow_patterns=["params.json", "consolidated.safetensors", "tokenizer.model.v3"], local_dir=mistral_models_path)

```

### Chat

After installing `mistral_inference`, a `mistral-chat` CLI command should be available in your environment. You can chat with the model using

```

mistral-chat $HOME/mistral_models/7B-Instruct-v0.3 --instruct --max_tokens 256

```

### Instruction following

```py

from mistral_inference.model import Transformer

from mistral_inference.generate import generate

from mistral_common.tokens.tokenizers.mistral import MistralTokenizer

from mistral_common.protocol.instruct.messages import UserMessage

from mistral_common.protocol.instruct.request import ChatCompletionRequest

tokenizer = MistralTokenizer.from_file(f"{mistral_models_path}/tokenizer.model.v3")

model = Transformer.from_folder(mistral_models_path)

completion_request = ChatCompletionRequest(messages=[UserMessage(content="Explain Machine Learning to me in a nutshell.")])

tokens = tokenizer.encode_chat_completion(completion_request).tokens

out_tokens, _ = generate([tokens], model, max_tokens=64, temperature=0.0, eos_id=tokenizer.instruct_tokenizer.tokenizer.eos_id)

result = tokenizer.instruct_tokenizer.tokenizer.decode(out_tokens[0])

print(result)

```

### Function calling

```py

from mistral_common.protocol.instruct.tool_calls import Function, Tool
from mistral_inference.model import Transformer
from mistral_inference.generate import generate

from mistral_common.tokens.tokenizers.mistral import MistralTokenizer
from mistral_common.protocol.instruct.messages import UserMessage
from mistral_common.protocol.instruct.request import ChatCompletionRequest

# mistral_models_path is the directory created in the Download section
tokenizer = MistralTokenizer.from_file(f"{mistral_models_path}/tokenizer.model.v3")
model = Transformer.from_folder(mistral_models_path)

# Describe the tool with a JSON Schema and attach it to the request
completion_request = ChatCompletionRequest(
    tools=[
        Tool(
            function=Function(
                name="get_current_weather",
                description="Get the current weather",
                parameters={
                    "type": "object",
                    "properties": {
                        "location": {
                            "type": "string",
                            "description": "The city and state, e.g. San Francisco, CA",
                        },
                        "format": {
                            "type": "string",
                            "enum": ["celsius", "fahrenheit"],
                            "description": "The temperature unit to use. Infer this from the users location.",
                        },
                    },
                    "required": ["location", "format"],
                },
            )
        )
    ],
    messages=[
        UserMessage(content="What's the weather like today in Paris?"),
    ],
)

tokens = tokenizer.encode_chat_completion(completion_request).tokens

out_tokens, _ = generate([tokens], model, max_tokens=64, temperature=0.0, eos_id=tokenizer.instruct_tokenizer.tokenizer.eos_id)
result = tokenizer.instruct_tokenizer.tokenizer.decode(out_tokens[0])

print(result)

```
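Once the model emits a tool call, the calling application still has to parse it and run the matching function. The sketch below assumes the decoded `result` is a JSON list of objects with `name` and `arguments` keys; that output shape, the sample string, and the local `get_current_weather` stub are assumptions for illustration and should be checked against real model output.

```python
import json


def get_current_weather(location, format):
    """Hypothetical local implementation; a real one would query a weather service."""
    return f"22 degrees {format} in {location}"


# Registry mapping tool names to local callables.
TOOLS = {"get_current_weather": get_current_weather}


def dispatch_tool_calls(raw_output):
    """Parse a JSON list of tool calls and run each registered function."""
    calls = json.loads(raw_output)
    results = []
    for call in calls:
        func = TOOLS.get(call["name"])
        if func is None:
            raise ValueError(f"unknown tool: {call['name']}")
        results.append(func(**call["arguments"]))
    return results


# Assumed example of decoded model output, not a captured log.
sample = '[{"name": "get_current_weather", "arguments": {"location": "Paris", "format": "celsius"}}]'
print(dispatch_tool_calls(sample))
```

Keeping the registry explicit means the model can only trigger functions the application has chosen to expose.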

## Generate with `transformers`

If you want to use Hugging Face `transformers` to generate text, you can do something like this.

```py

from transformers import pipeline

messages = [

{"role": "system", "content": "You are a pirate chatbot who always responds in pirate speak!"},

{"role": "user", "content": "Who are you?"},

]

chatbot = pipeline("text-generation", model="mistralai/Mistral-7B-Instruct-v0.3")

chatbot(messages)

```

## Limitations

The Mistral 7B Instruct model is a quick demonstration that the base model can be easily fine-tuned to achieve compelling performance.

It does not have any moderation mechanisms. We're looking forward to engaging with the community on ways to make the model finely respect guardrails, allowing for deployment in environments requiring moderated outputs.

## The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Alexis Tacnet, Antoine Roux, Arthur Mensch, Audrey Herblin-Stoop, Baptiste Bout, Baudouin de Monicault, Blanche Savary, Bam4d, Caroline Feldman, Devendra Singh Chaplot, Diego de las Casas, Eleonore Arcelin, Emma Bou Hanna, Etienne Metzger, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Harizo Rajaona, Jean-Malo Delignon, Jia Li, Justus Murke, Louis Martin, Louis Ternon, Lucile Saulnier, Lélio Renard Lavaud, Margaret Jennings, Marie Pellat, Marie Torelli, Marie-Anne Lachaux, Nicolas Schuhl, Patrick von Platen, Pierre Stock, Sandeep Subramanian, Sophia Yang, Szymon Antoniak, Teven Le Scao, Thibaut Lavril, Timothée Lacroix, Théophile Gervet, Thomas Wang, Valera Nemychnikova, William El Sayed, William Marshall |

lmsys/vicuna-7b-v1.5 | lmsys | "2024-03-13T02:01:41Z" | 431,698 | 259 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"arxiv:2307.09288",

"arxiv:2306.05685",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2023-07-29T04:42:33Z" | ---

inference: false

license: llama2

---

# Vicuna Model Card

## Model Details

Vicuna is a chat assistant trained by fine-tuning Llama 2 on user-shared conversations collected from ShareGPT.

- **Developed by:** [LMSYS](https://lmsys.org/)

- **Model type:** An auto-regressive language model based on the transformer architecture

- **License:** Llama 2 Community License Agreement

- **Finetuned from model:** [Llama 2](https://arxiv.org/abs/2307.09288)

### Model Sources

- **Repository:** https://github.com/lm-sys/FastChat

- **Blog:** https://lmsys.org/blog/2023-03-30-vicuna/

- **Paper:** https://arxiv.org/abs/2306.05685

- **Demo:** https://chat.lmsys.org/

## Uses

The primary use of Vicuna is research on large language models and chatbots.

The primary intended users of the model are researchers and hobbyists in natural language processing, machine learning, and artificial intelligence.

## How to Get Started with the Model

- Command line interface: https://github.com/lm-sys/FastChat#vicuna-weights

- APIs (OpenAI API, Huggingface API): https://github.com/lm-sys/FastChat/tree/main#api

## Training Details

Vicuna v1.5 is fine-tuned from Llama 2 with supervised instruction fine-tuning.

The training data is around 125K conversations collected from ShareGPT.com.

See more details in the "Training Details of Vicuna Models" section in the appendix of this [paper](https://arxiv.org/pdf/2306.05685.pdf).

## Evaluation

Vicuna is evaluated with standard benchmarks, human preference, and LLM-as-a-judge. See more details in this [paper](https://arxiv.org/pdf/2306.05685.pdf) and [leaderboard](https://huggingface.co/spaces/lmsys/chatbot-arena-leaderboard).

## Difference between different versions of Vicuna

See [vicuna_weights_version.md](https://github.com/lm-sys/FastChat/blob/main/docs/vicuna_weights_version.md) |

microsoft/phi-2 | microsoft | "2024-04-29T16:25:56Z" | 428,611 | 3,199 | transformers | [

"transformers",

"safetensors",

"phi",

"text-generation",

"nlp",

"code",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2023-12-13T21:19:59Z" | ---

license: mit

license_link: https://huggingface.co/microsoft/phi-2/resolve/main/LICENSE

language:

- en

pipeline_tag: text-generation

tags:

- nlp

- code

---

## Model Summary

Phi-2 is a Transformer with **2.7 billion** parameters. It was trained using the same data sources as [Phi-1.5](https://huggingface.co/microsoft/phi-1.5), augmented with a new data source that consists of various NLP synthetic texts and filtered websites (for safety and educational value). When assessed against benchmarks testing common sense, language understanding, and logical reasoning, Phi-2 showed nearly state-of-the-art performance among models with fewer than 13 billion parameters.

Our model hasn't been fine-tuned through reinforcement learning from human feedback. The intention behind crafting this open-source model is to provide the research community with a non-restricted small model to explore vital safety challenges, such as reducing toxicity, understanding societal biases, enhancing controllability, and more.

## How to Use

Phi-2 has been integrated into `transformers` version 4.37.0. Please ensure that you are using that version or a later one.

Phi-2 is known for having an attention overflow issue (with FP16). If you are facing this issue, please enable/disable autocast on the [PhiAttention.forward()](https://github.com/huggingface/transformers/blob/main/src/transformers/models/phi/modeling_phi.py#L306) function.

## Intended Uses

Given the nature of the training data, the Phi-2 model is best suited for prompts using the QA format, the chat format, and the code format.

### QA Format:

You can provide the prompt as a standalone question as follows:

```markdown

Write a detailed analogy between mathematics and a lighthouse.

```

where the model generates the text after "." .

To encourage the model to write more concise answers, you can also try the following QA format using "Instruct: \<prompt\>\nOutput:"

```markdown

Instruct: Write a detailed analogy between mathematics and a lighthouse.

Output: Mathematics is like a lighthouse. Just as a lighthouse guides ships safely to shore, mathematics provides a guiding light in the world of numbers and logic. It helps us navigate through complex problems and find solutions. Just as a lighthouse emits a steady beam of light, mathematics provides a consistent framework for reasoning and problem-solving. It illuminates the path to understanding and helps us make sense of the world around us.

```

where the model generates the text after "Output:".
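The QA format described above can be built and parsed with two small helpers. This is a minimal sketch; `build_qa_prompt` and `extract_answer` are hypothetical names introduced here, not part of any library.

```python
def build_qa_prompt(question):
    """Wrap a question in the Instruct/Output prompt format."""
    return f"Instruct: {question}\nOutput:"


def extract_answer(generated_text):
    """Return the text that follows the first "Output:" marker."""
    _, _, answer = generated_text.partition("Output:")
    return answer.strip()


prompt = build_qa_prompt("Write a detailed analogy between mathematics and a lighthouse.")
print(prompt)
```

The string returned by `build_qa_prompt` is what would be passed to the tokenizer, and `extract_answer` strips the prompt from the decoded generation.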

### Chat Format:

```markdown

Alice: I don't know why, I'm struggling to maintain focus while studying. Any suggestions?

Bob: Well, have you tried creating a study schedule and sticking to it?

Alice: Yes, I have, but it doesn't seem to help much.

Bob: Hmm, maybe you should try studying in a quiet environment, like the library.

Alice: ...

```

where the model generates the text after the first "Bob:".
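A chat-format prompt ends with the name of the speaker whose turn the model should write. A minimal sketch of rendering such a prompt from a list of turns follows; `build_chat_prompt` is a hypothetical helper name.

```python
def build_chat_prompt(turns, next_speaker):
    """Render (speaker, text) turns and end with the next speaker's name."""
    lines = [f"{speaker}: {text}" for speaker, text in turns]
    # The trailing "Name:" line is where the model continues writing.
    lines.append(f"{next_speaker}:")
    return "\n".join(lines)


turns = [
    ("Alice", "I don't know why, I'm struggling to maintain focus while studying. Any suggestions?"),
]
chat_prompt = build_chat_prompt(turns, next_speaker="Bob")
print(chat_prompt)
```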

### Code Format:

```python

import math

def print_prime(n):
   """
   Print all primes between 1 and n
   """
   primes = []
   for num in range(2, n+1):
       is_prime = True
       # Trial division up to the square root of num
       for i in range(2, int(math.sqrt(num))+1):
           if num % i == 0:
               is_prime = False
               break
       if is_prime:
           primes.append(num)
   print(primes)

```

where the model generates the text after the comments.

**Notes:**

* Phi-2 is intended for QA, chat, and code purposes. The model-generated text/code should be treated as a starting point rather than a definitive solution for potential use cases. Users should be cautious when employing these models in their applications.

* Direct adoption for production tasks without evaluation is out of scope of this project. As a result, the Phi-2 model has not been tested to ensure that it performs adequately for any production-level application. Please refer to the limitation sections of this document for more details.

* If you are using `transformers<4.37.0`, always load the model with `trust_remote_code=True` to prevent side-effects.

## Sample Code

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

torch.set_default_device("cuda")

model = AutoModelForCausalLM.from_pretrained("microsoft/phi-2", torch_dtype="auto", trust_remote_code=True)

tokenizer = AutoTokenizer.from_pretrained("microsoft/phi-2", trust_remote_code=True)

inputs = tokenizer('''def print_prime(n):
   """
   Print all primes between 1 and n
   """''', return_tensors="pt", return_attention_mask=False)

outputs = model.generate(**inputs, max_length=200)

text = tokenizer.batch_decode(outputs)[0]

print(text)

```

## Limitations of Phi-2

* Generate Inaccurate Code and Facts: The model may produce incorrect code snippets and statements. Users should treat these outputs as suggestions or starting points, not as definitive or accurate solutions.

* Limited Scope for code: The majority of Phi-2's training data is based on Python and uses common packages such as "typing, math, random, collections, datetime, itertools". If the model generates Python scripts that utilize other packages or scripts in other languages, we strongly recommend users manually verify all API uses.

* Unreliable Responses to Instruction: The model has not undergone instruction fine-tuning. As a result, it may struggle or fail to adhere to intricate or nuanced instructions provided by users.

* Language Limitations: The model is primarily designed to understand standard English. Informal English, slang, or any other languages might pose challenges to its comprehension, leading to potential misinterpretations or errors in response.

* Potential Societal Biases: Phi-2 is not entirely free from societal biases despite efforts in assuring training data safety. There's a possibility it may generate content that mirrors these societal biases, particularly if prompted or instructed to do so. We urge users to be aware of this and to exercise caution and critical thinking when interpreting model outputs.

* Toxicity: Despite being trained with carefully selected data, the model can still produce harmful content if explicitly prompted or instructed to do so. We chose to release the model to help the open-source community develop the most effective ways to reduce the toxicity of a model directly after pretraining.

* Verbosity: Phi-2 being a base model often produces irrelevant or extra text and responses following its first answer to user prompts within a single turn. This is due to its training dataset being primarily textbooks, which results in textbook-like responses.

## Training

### Model

* Architecture: a Transformer-based model with next-word prediction objective

* Context length: 2048 tokens

* Dataset size: 250B tokens, combination of NLP synthetic data created by AOAI GPT-3.5 and filtered web data from Falcon RefinedWeb and SlimPajama, which was assessed by AOAI GPT-4.

* Training tokens: 1.4T tokens

* GPUs: 96xA100-80G

* Training time: 14 days
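The figures above imply a few derived quantities that are easy to check. The sketch below assumes all 96 GPUs were in use for the full 14 days, which the card does not state explicitly, so the throughput number is only a rough estimate.

```python
DATASET_TOKENS = 250e9    # dataset size from the list above
TRAINING_TOKENS = 1.4e12  # training tokens from the list above
NUM_GPUS = 96
TRAINING_DAYS = 14

# How many times the dataset was seen on average.
passes_over_data = TRAINING_TOKENS / DATASET_TOKENS
# Total compute budget, assuming continuous use of every GPU.
gpu_hours = NUM_GPUS * TRAINING_DAYS * 24
# Average throughput per GPU under the same assumption.
tokens_per_gpu_second = TRAINING_TOKENS / (gpu_hours * 3600)

print(f"passes over the dataset: {passes_over_data:.1f}")
print(f"GPU-hours: {gpu_hours}")
print(f"tokens per GPU per second: {tokens_per_gpu_second:.0f}")
```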

### Software

* [PyTorch](https://github.com/pytorch/pytorch)

* [DeepSpeed](https://github.com/microsoft/DeepSpeed)

* [Flash-Attention](https://github.com/HazyResearch/flash-attention)

### License

The model is licensed under the [MIT license](https://huggingface.co/microsoft/phi-2/resolve/main/LICENSE).

## Trademarks

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow [Microsoft’s Trademark & Brand Guidelines](https://www.microsoft.com/en-us/legal/intellectualproperty/trademarks). Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos are subject to those third-party’s policies. |

sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking | sentence-transformers | "2024-03-27T10:23:51Z" | 425,508 | 5 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"tf",

"safetensors",

"distilbert",

"feature-extraction",

"sentence-similarity",

"transformers",

"arxiv:1908.10084",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | sentence-similarity | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

library_name: sentence-transformers

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

pipeline_tag: sentence-similarity

---

# sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking')

embeddings = model.encode(sentences)

print(embeddings)

```
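For semantic search, the embeddings are usually compared with cosine similarity and the corpus is ranked by score. The following is a minimal sketch in plain Python; the three-dimensional toy vectors stand in for real 768-dimensional embeddings, and `rank_by_similarity` is a hypothetical helper name.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, corpus_vecs):
    """Return corpus indices sorted from most to least similar to the query."""
    scores = [cosine_similarity(query_vec, vec) for vec in corpus_vecs]
    return sorted(range(len(corpus_vecs)), key=lambda i: scores[i], reverse=True)


# Toy vectors (assumed for illustration, not real model output).
query = [1.0, 0.0, 1.0]
corpus = [[0.0, 1.0, 0.0], [1.0, 0.1, 0.9], [0.5, 0.5, 0.5]]
print(rank_by_similarity(query, corpus))
```

In practice the vectors would come from `model.encode(...)`, and a vectorized implementation would be preferable for large corpora.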

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel
import torch


# Mean Pooling - Take attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking')
model = AutoModel.from_pretrained('sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```
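The pooling step averages only the token vectors whose attention-mask entry is 1, so padding does not distort the sentence embedding. A tiny worked example in plain Python (no tensors) makes the effect visible; `masked_mean` is a hypothetical name and the numbers are made up.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average token vectors, counting only positions where the mask is 1."""
    dim = len(token_embeddings[0])
    total = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            total = [t + v for t, v in zip(total, vector)]
            count += 1
    # Guard against an all-padding input, like the clamp in the tensor version.
    return [t / max(count, 1e-9) for t in total]


tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]  # last row is padding
mask = [1, 1, 0]
print(masked_mean(tokens, mask))
```

Without the mask, the padding row would pull the average far away from the two real tokens.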

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/distilbert-multilingual-nli-stsb-quora-ranking)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: DistilBertModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

This model was trained by [sentence-transformers](https://www.sbert.net/).

If you find this model helpful, feel free to cite our publication [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084):

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",

month = "11",

year = "2019",

publisher = "Association for Computational Linguistics",

url = "http://arxiv.org/abs/1908.10084",

}

``` |

Hate-speech-CNERG/english-abusive-MuRIL | Hate-speech-CNERG | "2023-03-19T13:28:03Z" | 421,808 | 5 | transformers | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"en",

"arxiv:2204.12543",

"license:afl-3.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2022-04-26T19:19:05Z" | ---

language: en

license: afl-3.0

---

This model is used for detecting **abusive speech** in **English**. It is fine-tuned from the MuRIL model on an English abusive speech dataset.

The model is trained with a learning rate of 2e-5. Training code can be found at this [url](https://github.com/hate-alert/IndicAbusive)

LABEL_0 :-> Normal

LABEL_1 :-> Abusive
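The raw label IDs above can be mapped to readable names after classification. The sketch below assumes predictions arrive as a list of dictionaries with `label` and `score` keys; the sample scores are made up for illustration, and `readable_predictions` is a hypothetical helper name.

```python
# Mapping taken from the label list above.
LABEL_NAMES = {"LABEL_0": "Normal", "LABEL_1": "Abusive"}


def readable_predictions(predictions):
    """Replace raw label IDs with the names listed above."""
    return [
        {"label": LABEL_NAMES[p["label"]], "score": p["score"]}
        for p in predictions
    ]


# Assumed example of classifier output, not a real model result.
raw = [{"label": "LABEL_1", "score": 0.97}, {"label": "LABEL_0", "score": 0.88}]
print(readable_predictions(raw))
```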

### For more details about our paper

Mithun Das, Somnath Banerjee and Animesh Mukherjee. "[Data Bootstrapping Approaches to Improve Low Resource Abusive Language Detection for Indic Languages](https://arxiv.org/abs/2204.12543)". Accepted at ACM HT 2022.

***Please cite our paper in any published work that uses any of these resources.***

~~~

@article{das2022data,

title={Data Bootstrapping Approaches to Improve Low Resource Abusive Language Detection for Indic Languages},

author={Das, Mithun and Banerjee, Somnath and Mukherjee, Animesh},

journal={arXiv preprint arXiv:2204.12543},

year={2022}

}

~~~ |

apple/DFN5B-CLIP-ViT-H-14-378 | apple | "2023-10-31T18:02:40Z" | 418,548 | 38 | open_clip | [

"open_clip",

"pytorch",

"clip",

"arxiv:2309.17425",

"license:other",

"region:us"

] | null | "2023-10-30T23:08:21Z" | ---

license: other

license_name: apple-sample-code-license

license_link: LICENSE

---

A CLIP (Contrastive Language-Image Pre-training) model trained on DFN-5B.

Data Filtering Networks (DFNs) are small networks used to automatically filter large pools of uncurated data.

This model was trained on 5B images that were filtered from a pool of 43B uncurated image-text pairs

(12.8B image-text pairs from CommonPool-12.8B + 30B additional public image-text pairs).

This model has been converted to PyTorch from the original JAX checkpoints from Axlearn (https://github.com/apple/axlearn).

These weights are directly usable in OpenCLIP (image + text).

## Model Details

- **Model Type:** Contrastive Image-Text, Zero-Shot Image Classification.

- **Dataset:** DFN-5b

- **Papers:**

- Data Filtering Networks: https://arxiv.org/abs/2309.17425

- **Samples Seen:** 39B (224 x 224) + 5B (384 x 384)

## Model Metrics

| dataset | metric |

|:-----------------------|---------:|

| ImageNet 1k | 0.84218 |

| Caltech-101 | 0.954479 |

| CIFAR-10 | 0.9879 |

| CIFAR-100 | 0.9041 |

| CLEVR Counts | 0.362467 |

| CLEVR Distance | 0.206067 |

| Country211 | 0.37673 |

| Describable Textures | 0.71383 |

| EuroSAT | 0.608333 |

| FGVC Aircraft | 0.719938 |

| Food-101 | 0.963129 |

| GTSRB | 0.679018 |

| ImageNet Sketch | 0.73338 |

| ImageNet v2 | 0.7837 |

| ImageNet-A | 0.7992 |

| ImageNet-O | 0.3785 |

| ImageNet-R | 0.937633 |

| KITTI Vehicle Distance | 0.38256 |

| MNIST | 0.8372 |

| ObjectNet <sup>1</sup> | 0.796867 |

| Oxford Flowers-102 | 0.896834 |

| Oxford-IIIT Pet | 0.966841 |

| Pascal VOC 2007 | 0.826255 |

| PatchCamelyon | 0.695953 |

| Rendered SST2 | 0.566722 |

| RESISC45 | 0.755079 |

| Stanford Cars | 0.959955 |

| STL-10 | 0.991125 |

| SUN397 | 0.772799 |

| SVHN | 0.671251 |

| Flickr | 0.8808 |

| MSCOCO | 0.636889 |

| WinoGAViL | 0.571813 |

| iWildCam | 0.224911 |

| Camelyon17 | 0.711536 |

| FMoW | 0.209024 |

| Dollar Street | 0.71729 |

| GeoDE | 0.935699 |

| **Average** | **0.709421** |

[1]: Center-crop pre-processing used for ObjectNet (squashing results in lower accuracy of 0.737)

## Model Usage

### With OpenCLIP

```

import torch
import torch.nn.functional as F
from urllib.request import urlopen
from PIL import Image
from open_clip import create_model_from_pretrained, get_tokenizer

# Model ID matches this repository (apple/DFN5B-CLIP-ViT-H-14-378)
model, preprocess = create_model_from_pretrained('hf-hub:apple/DFN5B-CLIP-ViT-H-14-378')
tokenizer = get_tokenizer('ViT-H-14')

image = Image.open(urlopen(
    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'
))
image = preprocess(image).unsqueeze(0)

labels_list = ["a dog", "a cat", "a donut", "a beignet"]
text = tokenizer(labels_list, context_length=model.context_length)

with torch.no_grad(), torch.cuda.amp.autocast():
    image_features = model.encode_image(image)
    text_features = model.encode_text(text)
    image_features = F.normalize(image_features, dim=-1)
    text_features = F.normalize(text_features, dim=-1)

    logits = image_features @ text_features.T * model.logit_scale.exp()
    # Add the learned bias only when the checkpoint defines one
    if getattr(model, "logit_bias", None) is not None:
        logits = logits + model.logit_bias
    text_probs = torch.sigmoid(logits)

zipped_list = list(zip(labels_list, [round(p.item(), 3) for p in text_probs[0]]))
print("Label probabilities: ", zipped_list)

```

## Citation

```bibtex

@article{fang2023data,

title={Data Filtering Networks},

author={Fang, Alex and Jose, Albin Madappally and Jain, Amit and Schmidt, Ludwig and Toshev, Alexander and Shankar, Vaishaal},

journal={arXiv preprint arXiv:2309.17425},

year={2023}

}

``` |

eugenesiow/edsr-base | eugenesiow | "2021-07-28T09:04:00Z" | 412,159 | 8 | transformers | [

"transformers",

"EDSR",

"super-image",

"image-super-resolution",

"dataset:eugenesiow/Div2k",

"dataset:eugenesiow/Set5",

"dataset:eugenesiow/Set14",

"dataset:eugenesiow/BSD100",

"dataset:eugenesiow/Urban100",

"arxiv:1707.02921",

"arxiv:2104.07566",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

tags:

- super-image

- image-super-resolution

datasets:

- eugenesiow/Div2k

- eugenesiow/Set5

- eugenesiow/Set14

- eugenesiow/BSD100

- eugenesiow/Urban100

metrics:

- psnr

- ssim

---

# Enhanced Deep Residual Networks for Single Image Super-Resolution (EDSR)

EDSR model pre-trained on DIV2K (800 images training, augmented to 4000 images, 100 images validation) for 2x, 3x and 4x image super resolution. It was introduced in the paper [Enhanced Deep Residual Networks for Single Image Super-Resolution](https://arxiv.org/abs/1707.02921) by Lim et al. (2017) and first released in [this repository](https://github.com/sanghyun-son/EDSR-PyTorch).

The goal of image super resolution is to restore a high resolution (HR) image from a single low resolution (LR) image. The image below shows the ground truth (HR), the bicubic upscaling x2 and EDSR upscaling x2.

## Model description

EDSR uses a deeper and wider architecture (32 ResBlocks and 256 channels) to improve performance. It uses both global and local skip connections, and upscaling is done at the end of the network. It does not use batch normalization layers: the input and output have similar distributions, so normalizing intermediate features may not be desirable. Instead, it uses constant scaling layers to ensure stable training. An L1 loss function (absolute error) is used instead of L2 (MSE); the authors showed empirically that it performs better, and it requires less computation.

This is a base model (~5 MB vs ~100 MB) that includes just 16 ResBlocks and 64 channels.

## Intended uses & limitations

You can use the pre-trained models for upscaling your images 2x, 3x and 4x. You can also use the trainer to train a model on your own dataset.

### How to use

The model can be used with the [super_image](https://github.com/eugenesiow/super-image) library:

```bash

pip install super-image

```

Here is how to use a pre-trained model to upscale your image:

```python

from super_image import EdsrModel, ImageLoader

from PIL import Image

import requests

url = 'https://paperswithcode.com/media/datasets/Set5-0000002728-07a9793f_zA3bDjj.jpg'

image = Image.open(requests.get(url, stream=True).raw)

model = EdsrModel.from_pretrained('eugenesiow/edsr-base', scale=2) # scale 2, 3 and 4 models available

inputs = ImageLoader.load_image(image)

preds = model(inputs)

ImageLoader.save_image(preds, './scaled_2x.png') # save the output 2x scaled image to `./scaled_2x.png`

ImageLoader.save_compare(inputs, preds, './scaled_2x_compare.png') # save an output comparing the super-image with a bicubic scaling

```

[Open in Colab](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Upscale_Images_with_Pretrained_super_image_Models.ipynb "Open in Colab")

## Training data

The models for 2x, 3x and 4x image super resolution were pretrained on [DIV2K](https://huggingface.co/datasets/eugenesiow/Div2k), a dataset of 800 high-quality (2K resolution) training images, augmented to 4000 images, with a dev set of 100 validation images (images numbered 801 to 900).

## Training procedure

### Preprocessing

We follow the pre-processing and training method of [Wang et al.](https://arxiv.org/abs/2104.07566).

Low Resolution (LR) images are created by using bicubic interpolation as the resizing method to reduce the size of the High Resolution (HR) images by x2, x3 and x4 times.

During training, RGB patches with size of 64×64 from the LR input are used together with their corresponding HR patches.

Data augmentation is applied to the training set in the pre-processing stage where five images are created from the four corners and center of the original image.
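The five-crop augmentation described above can be sketched in plain Python. This is only an illustration of the idea, not the `augment_five_crop` implementation from `super_image`; the helper name `five_crop_boxes` and the crop size used in the example are assumptions.

```python
def five_crop_boxes(width, height, crop_w, crop_h):
    """Return (left, top, right, bottom) boxes for the four corners and the center.

    Hypothetical helper for illustration; it is not part of super_image.
    """
    if crop_w > width or crop_h > height:
        raise ValueError("crop size must not exceed the image size")
    right = width - crop_w    # left edge of the right-hand crops
    bottom = height - crop_h  # top edge of the bottom crops
    origins = [
        (0, 0),                      # top-left corner
        (right, 0),                  # top-right corner
        (0, bottom),                 # bottom-left corner
        (right, bottom),             # bottom-right corner
        (right // 2, bottom // 2),   # center
    ]
    return [(x, y, x + crop_w, y + crop_h) for x, y in origins]


# Five boxes for a 100x80 image with 50x40 crops (sizes chosen arbitrarily).
boxes = five_crop_boxes(100, 80, 50, 40)
for box in boxes:
    print(box)
```

Each box could then be passed to an image-cropping call of your choice, which is how one source image turns into five training images.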

We need the huggingface [datasets](https://huggingface.co/datasets?filter=task_ids:other-other-image-super-resolution) library to download the data:

```bash

pip install datasets

```

The following code gets the data and preprocesses/augments the data.

```python

from datasets import load_dataset

from super_image.data import EvalDataset, TrainDataset, augment_five_crop

augmented_dataset = load_dataset('eugenesiow/Div2k', 'bicubic_x4', split='train').map(
    augment_five_crop, batched=True, desc="Augmenting Dataset"
)  # download and augment the data with the five_crop method

train_dataset = TrainDataset(augmented_dataset) # prepare the train dataset for loading PyTorch DataLoader

eval_dataset = EvalDataset(load_dataset('eugenesiow/Div2k', 'bicubic_x4', split='validation')) # prepare the eval dataset for the PyTorch DataLoader

```

### Pretraining

The model was trained on GPU. The training code is provided below:

```python

from super_image import Trainer, TrainingArguments, EdsrModel, EdsrConfig

training_args = TrainingArguments(

output_dir='./results', # output directory

num_train_epochs=1000, # total number of training epochs

)

config = EdsrConfig(

scale=4, # train a model to upscale 4x

)

model = EdsrModel(config)

trainer = Trainer(

model=model, # the instantiated model to be trained

args=training_args, # training arguments, defined above

train_dataset=train_dataset, # training dataset

eval_dataset=eval_dataset # evaluation dataset

)

trainer.train()

```

[Open in Colab](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Train_super_image_Models.ipynb "Open in Colab")

## Evaluation results

The evaluation metrics include [PSNR](https://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio#Quality_estimation_with_PSNR) and [SSIM](https://en.wikipedia.org/wiki/Structural_similarity#Algorithm).
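For readers unfamiliar with PSNR, here is a minimal sketch of the standard definition, 10 · log10(MAX² / MSE), on flat lists of pixel values. It is an assumption-laden illustration: the function name `psnr` and the sample pixel values are made up, and benchmark evaluations often add steps (such as border cropping or luminance-only comparison) that this sketch does not perform.

```python
import math


def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(estimate):
        raise ValueError("inputs must have the same number of pixels")
    # Mean squared error between the two signals.
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Made-up pixel values, for illustration only.
hr = [52, 55, 61, 59]   # ground-truth pixels
sr = [50, 56, 60, 62]   # upscaled pixels
score = psnr(hr, sr)
print(round(score, 2))
```

Higher values mean the upscaled image is closer to the ground truth.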

Evaluation datasets include:

- Set5 - [Bevilacqua et al. (2012)](https://huggingface.co/datasets/eugenesiow/Set5)

- Set14 - [Zeyde et al. (2010)](https://huggingface.co/datasets/eugenesiow/Set14)

- BSD100 - [Martin et al. (2001)](https://huggingface.co/datasets/eugenesiow/BSD100)

- Urban100 - [Huang et al. (2015)](https://huggingface.co/datasets/eugenesiow/Urban100)

The results columns below are given as `PSNR/SSIM`. They are compared against a Bicubic baseline.

|Dataset |Scale |Bicubic |edsr-base |
|--- |--- |--- |--- |
|Set5 |2x |33.64/0.9292 |**38.02/0.9607** |
|Set5 |3x |30.39/0.8678 |**35.04/0.9403** |
|Set5 |4x |28.42/0.8101 |**32.12/0.8947** |
|Set14 |2x |30.22/0.8683 |**33.57/0.9172** |
|Set14 |3x |27.53/0.7737 |**30.93/0.8567** |
|Set14 |4x |25.99/0.7023 |**28.60/0.7815** |
|BSD100 |2x |29.55/0.8425 |**32.21/0.8999** |
|BSD100 |3x |27.20/0.7382 |**29.65/0.8204** |
|BSD100 |4x |25.96/0.6672 |**27.61/0.7363** |
|Urban100 |2x |26.66/0.8408 |**32.04/0.9276** |
|Urban100 |3x | |**29.23/0.8723** |
|Urban100 |4x |23.14/0.6573 |**26.02/0.7832** |

You can find a notebook to easily run evaluation on pretrained models below:

[Open in Colab](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Evaluate_Pretrained_super_image_Models.ipynb "Open in Colab")

## BibTeX entry and citation info

```bibtex

@InProceedings{Lim_2017_CVPR_Workshops,

author = {Lim, Bee and Son, Sanghyun and Kim, Heewon and Nah, Seungjun and Lee, Kyoung Mu},

title = {Enhanced Deep Residual Networks for Single Image Super-Resolution},

booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},

month = {July},

year = {2017}

}

``` |

trpakov/vit-face-expression | trpakov | "2023-12-30T14:38:39Z" | 411,431 | 27 | transformers | [

"transformers",

"pytorch",

"onnx",

"safetensors",

"vit",

"image-classification",

"doi:10.57967/hf/2289",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | image-classification | "2022-11-09T12:50:30Z" | ---

{}

---

# Vision Transformer (ViT) for Facial Expression Recognition Model Card

## Model Overview

- **Model Name:** [trpakov/vit-face-expression](https://huggingface.co/trpakov/vit-face-expression)

- **Task:** Facial Expression/Emotion Recognition

- **Dataset:** [FER2013](https://www.kaggle.com/datasets/msambare/fer2013)

- **Model Architecture:** [Vision Transformer (ViT)](https://huggingface.co/docs/transformers/model_doc/vit)

- **Finetuned from model:** [vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k)

## Model Description

The vit-face-expression model is a Vision Transformer fine-tuned for the task of facial emotion recognition.

It is trained on the FER2013 dataset, which consists of facial images categorized into seven different emotions:

- Angry

- Disgust

- Fear

- Happy

- Sad

- Surprise

- Neutral

## Data Preprocessing

The input images are preprocessed before being fed into the model. The preprocessing steps include:

- **Resizing:** Images are resized to the specified input size.

- **Normalization:** Pixel values are normalized to a specific range.

- **Data Augmentation:** Random transformations such as rotations, flips, and zooms are applied to augment the training dataset.

## Evaluation Metrics

- **Validation set accuracy:** 0.7113

- **Test set accuracy:** 0.7116

## Limitations

- **Data Bias:** The model's performance may be influenced by biases present in the training data.

- **Generalization:** The model's ability to generalize to unseen data is subject to the diversity of the training dataset. |

cledoux42/Ethnicity_Test_v003 | cledoux42 | "2023-04-09T04:48:14Z" | 410,617 | 5 | transformers | [

"transformers",

"pytorch",

"vit",

"image-classification",

"autotrain",

"vision",

"dataset:cledoux42/autotrain-data-ethnicity-test_v003",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | image-classification | "2023-04-09T04:32:22Z" | ---

tags:

- autotrain

- vision

- image-classification

datasets:

- cledoux42/autotrain-data-ethnicity-test_v003

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg

example_title: Tiger

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg

example_title: Teapot

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg

example_title: Palace

co2_eq_emissions:

emissions: 6.022813032092885

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 47959117029

- CO2 Emissions (in grams): 6.0228

## Validation Metrics

- Loss: 0.530

- Accuracy: 0.796

- Macro F1: 0.797

- Micro F1: 0.796

- Weighted F1: 0.796

- Macro Precision: 0.797

- Micro Precision: 0.796

- Weighted Precision: 0.796

- Macro Recall: 0.798

- Micro Recall: 0.796

- Weighted Recall: 0.796 |

hatmimoha/arabic-ner | hatmimoha | "2023-11-13T10:53:17Z" | 407,021 | 14 | transformers | [

"transformers",

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"token-classification",

"ar",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | "2022-03-02T23:29:05Z" | ---

language: ar

---

# Arabic Named Entity Recognition Model

Pretrained BERT-based ([arabic-bert-base](https://huggingface.co/asafaya/bert-base-arabic)) Named Entity Recognition model for Arabic.

The pre-trained model can recognize the following entities:

1. **PERSON**

- و هذا ما نفاه المعاون السياسي للرئيس ***نبيه بري*** ، النائب ***علي حسن خليل***

- لكن أوساط ***الحريري*** تعتبر أنه ضحى كثيرا في سبيل البلد

- و ستفقد الملكة ***إليزابيث الثانية*** بذلك سيادتها على واحدة من آخر ممالك الكومنولث

2. **ORGANIZATION**

- حسب أرقام ***البنك الدولي***

- أعلن ***الجيش العراقي***

- و نقلت وكالة ***رويترز*** عن ثلاثة دبلوماسيين في ***الاتحاد الأوروبي*** ، أن ***بلجيكا*** و ***إيرلندا*** و ***لوكسمبورغ*** تريد أيضاً مناقشة

- ***الحكومة الاتحادية*** و ***حكومة إقليم كردستان***

- و هو ما يثير الشكوك حول مشاركة النجم البرتغالي في المباراة المرتقبة أمام ***برشلونة*** الإسباني في

3. **LOCATION**

- الجديد هو تمكين اللاجئين من “ مغادرة الجزيرة تدريجياً و بهدوء إلى ***أثينا*** ”

- ***جزيرة ساكيز*** تبعد 1 كم عن ***إزمير***

4. **DATE**

- ***غدا الجمعة***

- ***06 أكتوبر 2020***

- ***العام السابق***

5. **PRODUCT**

- عبر حسابه ب ***تطبيق “ إنستغرام ”***

- الجيل الثاني من ***نظارة الواقع الافتراضي أوكولوس كويست*** تحت اسم " ***أوكولوس كويست 2*** "

6. **COMPETITION**

- عدم المشاركة في ***بطولة فرنسا المفتوحة للتنس***

- في مباراة ***كأس السوبر الأوروبي***

7. **PRIZE**

- ***جائزة نوبل ل لآداب***

- الذي فاز ب ***جائزة “ إيمي ” لأفضل دور مساند***

8. **EVENT**

- تسجّل أغنية جديدة خاصة ب ***العيد الوطني السعودي***

- ***مهرجان المرأة يافوية*** في دورته الرابعة

9. **DISEASE**

- في مكافحة فيروس ***كورونا*** و عدد من الأمراض

- الأزمات المشابهة مثل “ ***انفلونزا الطيور*** ” و ” ***انفلونزا الخنازير***

## Example

[A complete example of how to use this model is available here](https://github.com/hatmimoha/arabic-ner)

## Training Corpus

The training corpus consists of 378,000 tokens (14,000 sentences) collected from the Web and annotated manually.

## Results

Results on a validation corpus of 30,000 tokens show an F-measure of ~87%.

|

facebook/detr-resnet-50 | facebook | "2024-04-10T13:56:31Z" | 406,564 | 576 | transformers | [

"transformers",

"pytorch",

"safetensors",

"detr",

"object-detection",

"vision",

"dataset:coco",

"arxiv:2005.12872",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | object-detection | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

tags:

- object-detection

- vision

datasets:

- coco

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg

example_title: Savanna

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg

example_title: Football Match

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg

example_title: Airport

---

# DETR (End-to-End Object Detection) model with ResNet-50 backbone

DEtection TRansformer (DETR) model trained end-to-end on COCO 2017 object detection (118k annotated images). It was introduced in the paper [End-to-End Object Detection with Transformers](https://arxiv.org/abs/2005.12872) by Carion et al. and first released in [this repository](https://github.com/facebookresearch/detr).

Disclaimer: The team releasing DETR did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

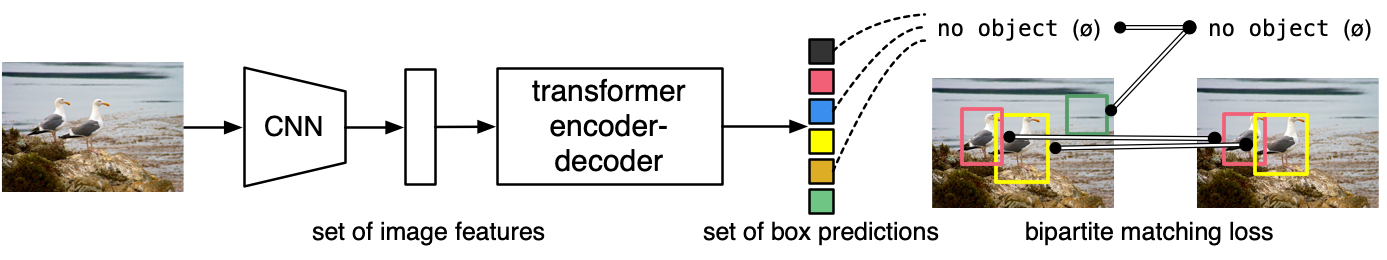

The DETR model is an encoder-decoder transformer with a convolutional backbone. Two heads are added on top of the decoder outputs in order to perform object detection: a linear layer for the class labels and an MLP (multi-layer perceptron) for the bounding boxes. The model uses so-called object queries to detect objects in an image. Each object query looks for a particular object in the image. For COCO, the number of object queries is set to 100.

The model is trained using a "bipartite matching loss": one compares the predicted classes + bounding boxes of each of the N = 100 object queries to the ground truth annotations, padded up to the same length N (so if an image only contains 4 objects, 96 annotations will just have a "no object" as class and "no bounding box" as bounding box). The Hungarian matching algorithm is used to create an optimal one-to-one mapping between each of the N queries and each of the N annotations. Next, standard cross-entropy (for the classes) and a linear combination of the L1 and generalized IoU loss (for the bounding boxes) are used to optimize the parameters of the model.
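The one-to-one matching step can be illustrated with a toy sketch. To keep it dependency-free, it finds the optimal assignment by brute force over permutations rather than with the Hungarian algorithm, and it uses a simplified cost (negative class probability plus L1 box distance, without the generalized IoU term). The names `pair_cost` and `match`, and all numbers, are assumptions made for this example.

```python
from itertools import permutations

NO_OBJECT = None  # padding target meaning "no object"


def pair_cost(pred, target):
    """Simplified matching cost between one query prediction and one target."""
    if target is NO_OBJECT:
        return 0.0  # padded slots do not influence which queries get real targets
    probs, box = pred
    label, target_box = target
    class_cost = -probs.get(label, 0.0)                           # prefer confident classes
    box_cost = sum(abs(a - b) for a, b in zip(box, target_box))   # L1 box distance
    return class_cost + box_cost


def match(preds, targets):
    """Return {target_index: query_index} for the lowest-cost one-to-one matching."""
    n = len(preds)
    padded = list(targets) + [NO_OBJECT] * (n - len(targets))
    best_cost, best_perm = float("inf"), None
    # Brute force is only feasible for tiny n; it stands in for the Hungarian algorithm.
    for perm in permutations(range(n)):
        cost = sum(pair_cost(preds[q], padded[t]) for t, q in enumerate(perm))
        if cost < best_cost:
            best_cost, best_perm = cost, perm
    return {t: best_perm[t] for t in range(len(targets))}


# Three queries, two ground-truth objects (toy values).
preds = [
    ({"cat": 0.1, "remote": 0.8}, (0.2, 0.2, 0.1, 0.1)),
    ({"cat": 0.9, "remote": 0.05}, (0.5, 0.5, 0.4, 0.6)),
    ({"cat": 0.3, "remote": 0.3}, (0.9, 0.9, 0.1, 0.1)),
]
targets = [("cat", (0.5, 0.5, 0.4, 0.6)), ("remote", (0.2, 0.2, 0.1, 0.1))]
assignment = match(preds, targets)
print(assignment)
```

In this toy case the remaining query is left with the "no object" slot, which mirrors the padding described above.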

## Intended uses & limitations

You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=facebook/detr) to look for all available DETR models.

### How to use

Here is how to use this model:

```python

from transformers import DetrImageProcessor, DetrForObjectDetection

import torch

from PIL import Image

import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

# you can specify the revision tag if you don't want the timm dependency

processor = DetrImageProcessor.from_pretrained("facebook/detr-resnet-50", revision="no_timm")

model = DetrForObjectDetection.from_pretrained("facebook/detr-resnet-50", revision="no_timm")

inputs = processor(images=image, return_tensors="pt")

outputs = model(**inputs)

# convert outputs (bounding boxes and class logits) to COCO API

# let's only keep detections with score > 0.9

target_sizes = torch.tensor([image.size[::-1]])

results = processor.post_process_object_detection(outputs, target_sizes=target_sizes, threshold=0.9)[0]

for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):
    box = [round(i, 2) for i in box.tolist()]
    print(
        f"Detected {model.config.id2label[label.item()]} with confidence "
        f"{round(score.item(), 3)} at location {box}"
    )

```

This should output:

```

Detected remote with confidence 0.998 at location [40.16, 70.81, 175.55, 117.98]

Detected remote with confidence 0.996 at location [333.24, 72.55, 368.33, 187.66]

Detected couch with confidence 0.995 at location [-0.02, 1.15, 639.73, 473.76]

Detected cat with confidence 0.999 at location [13.24, 52.05, 314.02, 470.93]

Detected cat with confidence 0.999 at location [345.4, 23.85, 640.37, 368.72]

```

Currently, both the feature extractor and model support PyTorch.

## Training data

The DETR model was trained on [COCO 2017 object detection](https://cocodataset.org/#download), a dataset consisting of 118k/5k annotated images for training/validation respectively.

## Training procedure

### Preprocessing

The exact details of preprocessing of images during training/validation can be found [here](https://github.com/google-research/vision_transformer/blob/master/vit_jax/input_pipeline.py).

Images are resized/rescaled such that the shortest side is at least 800 pixels and the largest side at most 1333 pixels, and normalized across the RGB channels with the ImageNet mean (0.485, 0.456, 0.406) and standard deviation (0.229, 0.224, 0.225).
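As a rough sketch of this resizing and normalization, the snippet below uses one common reading of the rule: scale the shorter side to 800 pixels unless that would push the longer side past 1333, in which case the longer side is capped at 1333. That reading, the rounding, and the helper names `resized_shape` and `normalize_pixel` are assumptions; the actual image processor may differ in details.

```python
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def resized_shape(width, height, shortest=800, longest=1333):
    """Target (width, height) after aspect-preserving resizing (assumed rule)."""
    scale = shortest / min(width, height)
    if max(width, height) * scale > longest:
        # The longer side would overshoot, so cap it instead.
        scale = longest / max(width, height)
    return round(width * scale), round(height * scale)


def normalize_pixel(rgb):
    """Map 0..255 RGB values to ImageNet-normalized floats."""
    return tuple((c / 255.0 - m) / s for c, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


print(resized_shape(640, 480))    # shorter side scaled to 800
print(resized_shape(1920, 600))   # longer side capped at 1333
print(normalize_pixel((255, 255, 255)))
```

Note that for very wide or tall images the cap on the longer side wins, so the shorter side can end up below 800 pixels under this reading.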

### Training

The model was trained for 300 epochs on 16 V100 GPUs. This takes 3 days, with 4 images per GPU (hence a total batch size of 64).

## Evaluation results

This model achieves an AP (average precision) of **42.0** on COCO 2017 validation. For more details regarding evaluation results, we refer to table 1 of the original paper.

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2005-12872,

author = {Nicolas Carion and

Francisco Massa and

Gabriel Synnaeve and

Nicolas Usunier and

Alexander Kirillov and

Sergey Zagoruyko},

title = {End-to-End Object Detection with Transformers},

journal = {CoRR},

volume = {abs/2005.12872},

year = {2020},

url = {https://arxiv.org/abs/2005.12872},

archivePrefix = {arXiv},

eprint = {2005.12872},

timestamp = {Thu, 28 May 2020 17:38:09 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2005-12872.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` |

zhihan1996/DNA_bert_6 | zhihan1996 | "2023-10-30T19:26:08Z" | 406,458 | 18 | transformers | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"custom_code",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | "2022-03-02T23:29:05Z" | Entry not found |

UrukHan/t5-russian-spell | UrukHan | "2023-04-05T10:13:50Z" | 405,137 | 29 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:UrukHan/wav2vec2-russian",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | "2022-03-29T14:20:26Z" | ---

tags:

- generated_from_trainer

datasets: UrukHan/wav2vec2-russian

widget:

- text: ывсем привет выныканалетоп армии и это двадцать пятый день спец операций на

украине ет самый главной новости российские военные ракетами кинжалы калибр уничтожили

крупную военную топливную базу украины ракетным ударом по населенному пункту под

жетамиром уничтжены более стаукраинских военных в две тысячи двадцать втором году

model-index:

- name: t5-russian-spell

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

---

# t5-russian-spell

---

A model for correcting text produced by speech recognition. My speech recognition model is https://huggingface.co/UrukHan/wav2vec2-russian, and its output can be fed into this model. Tested on a random YouTube video.

<table border="0">

<tr>

<td><b style="font-size:30px">Output wav2vec2</b></td>

<td><b style="font-size:30px">Output spell corrector</b></td>

</tr>

<tr>

<td>ывсем привет выныканалетоп армии и это двадцать пятый день спец операций на украине ет самый главной новости российские военные ракетами кинжалы калибр уничтожили крупную военную топливную базу украины ракетным ударом по населенному пункту под жетамиром уничтжены более стаукраинских военных в две тысячи двадцать втором году</td>

<td>Всем привет! Вы в курсе новостей от армии. И это 25 день спецопераций на Украине. Есть самые главные новости. Российские военные ракетами «Кинжалы» и «Кинжалы» калибра уничтожили крупную военную топливную базу Украины. Ракетным ударом по населенному пункту под Жетамиром уничтожены более ста украинских военных в 2022г.</td>

</tr>

</table>

#

---

Training datasets:

UrukHan/t5-russian-spell_I : https://huggingface.co/datasets/UrukHan/t5-russian-spell_I

UrukHan/t5-russian-spell_II : https://huggingface.co/datasets/UrukHan/t5-russian-spell_II

UrukHan/t5-russian-spell_III : https://huggingface.co/datasets/UrukHan/t5-russian-spell_III

---

# Running inference: a commented example in Colab https://colab.research.google.com/drive/1ame2va9_NflYqy4RZ07HYmQ0moJYy7w2?usp=sharing :

#

```python

# Install the transformers library (notebook syntax)
!pip install transformers

# Import libraries
from transformers import AutoModelForSeq2SeqLM, T5TokenizerFast

# Name of the chosen model on the Hub
MODEL_NAME = 'UrukHan/t5-russian-spell'
MAX_INPUT = 256

# Load the model and tokenizer
tokenizer = T5TokenizerFast.from_pretrained(MODEL_NAME)
model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_NAME)

# Input data (a list of phrases or a single text)
input_sequences = ['сеглдыя хорош ден', 'когд а вы прдет к нам в госи']  # or a single phrase: input_sequences = 'сеглдыя хорош ден'

task_prefix = "Spell correct: "  # Tokenize the data
if not isinstance(input_sequences, list): input_sequences = [input_sequences]

encoded = tokenizer(

[task_prefix + sequence for sequence in input_sequences],

padding="longest",

max_length=MAX_INPUT,

truncation=True,

return_tensors="pt",

)

predicts = model.generate(**encoded)  # Generate predictions (unpack input_ids and attention_mask)

tokenizer.batch_decode(predicts, skip_special_tokens=True)  # Decode the output

```

#

---

# A preconfigured notebook for running training and saving the model to your own Hugging Face Hub repository: https://colab.research.google.com/drive/1H4IoasDqa2TEjGivVDp-4Pdpm0oxrCWd?usp=sharing

#

```python

# Install libraries (notebook syntax)
!pip install datasets
!apt install git-lfs
!pip install transformers
!pip install sentencepiece
!pip install rouge_score

# Import libraries
import numpy as np
import pandas as pd  # needed for the array conversion example
from datasets import Dataset
import nltk
from transformers import T5TokenizerFast, Seq2SeqTrainingArguments, Seq2SeqTrainer, AutoModelForSeq2SeqLM, DataCollatorForSeq2Seq
import torch
from transformers.optimization import Adafactor, AdafactorSchedule
from datasets import load_dataset, load_metric

# Load resources
raw_datasets = load_dataset("xsum")
metric = load_metric("rouge")
nltk.download('punkt')

# Enter your Hugging Face Hub token
from huggingface_hub import notebook_login
notebook_login()

# Define parameters
REPO = "t5-russian-spell"  # Name of the repository
MODEL_NAME = "UrukHan/t5-russian-spell"  # Name of the chosen model on the Hub
MAX_INPUT = 256  # Maximum input length in tokens (as a rule of thumb, count half a word per token)
MAX_OUTPUT = 256  # Maximum prediction length in tokens (can be reduced for summarization or other tasks with shorter outputs)
BATCH_SIZE = 8
DATASET = 'UrukHan/t5-russian-spell_I'  # Name of the dataset

# Load the dataset (using other data types is described below)
data = load_dataset(DATASET)

# Load the model and tokenizer
tokenizer = T5TokenizerFast.from_pretrained(MODEL_NAME)
model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_NAME)

model.config.max_length = MAX_OUTPUT  # the default is 20, so generated sequences are otherwise truncated

# Optional: comment this out after the first save to your repository
tokenizer.push_to_hub(REPO)

train = data['train']
test = data['test'].train_test_split(0.02)['test']  # Shrink the test split so evaluation between epochs does not take long

data_collator = DataCollatorForSeq2Seq(tokenizer, model=model)  # return_tensors="tf"

def compute_metrics(eval_pred):
    predictions, labels = eval_pred
    decoded_preds = tokenizer.batch_decode(predictions, skip_special_tokens=True)
    # Replace -100 in the labels as we can't decode them.
    labels = np.where(labels != -100, labels, tokenizer.pad_token_id)
    decoded_labels = tokenizer.batch_decode(labels, skip_special_tokens=True)

    # Rouge expects a newline after each sentence
    decoded_preds = ["\n".join(nltk.sent_tokenize(pred.strip())) for pred in decoded_preds]
    decoded_labels = ["\n".join(nltk.sent_tokenize(label.strip())) for label in decoded_labels]

    result = metric.compute(predictions=decoded_preds, references=decoded_labels, use_stemmer=True)
    # Extract a few results
    result = {key: value.mid.fmeasure * 100 for key, value in result.items()}

    # Add mean generated length
    prediction_lens = [np.count_nonzero(pred != tokenizer.pad_token_id) for pred in predictions]
    result["gen_len"] = np.mean(prediction_lens)

    return {k: round(v, 4) for k, v in result.items()}

training_args = Seq2SeqTrainingArguments(

output_dir = REPO,

#overwrite_output_dir=True,

evaluation_strategy='steps',

#learning_rate=2e-5,

eval_steps=5000,

save_steps=5000,

num_train_epochs=1,

predict_with_generate=True,

per_device_train_batch_size=BATCH_SIZE,

per_device_eval_batch_size=BATCH_SIZE,

fp16=True,

save_total_limit=2,

#generation_max_length=256,

#generation_num_beams=4,

weight_decay=0.005,

#logging_dir='logs',

push_to_hub=True,

)

# Choose the optimizer manually. T5 in its original architecture uses the Adafactor optimizer

optimizer = Adafactor(

model.parameters(),

lr=1e-5,

eps=(1e-30, 1e-3),

clip_threshold=1.0,

decay_rate=-0.8,

beta1=None,

weight_decay=0.0,

relative_step=False,

scale_parameter=False,

warmup_init=False,

)

lr_scheduler = AdafactorSchedule(optimizer)

trainer = Seq2SeqTrainer(

model=model,

args=training_args,

train_dataset = train,

eval_dataset = test,

optimizers = (optimizer, lr_scheduler),

tokenizer = tokenizer,

compute_metrics=compute_metrics

)

trainer.train()

trainer.push_to_hub()

```

#

---

# Example of converting arrays for this network

#

```python

input_data = ['удач почти отнее отвернулась', 'в хааоде проведения чемпиониавта мира дветысячивосемнандцтая лгодаа']

output_data = ['Удача почти от нее отвернулась', 'в ходе проведения чемпионата мира две тысячи восемнадцатого года']

# Tokenize the input data

task_prefix = "Spell correct: "

input_sequences = input_data

encoding = tokenizer(

[task_prefix + sequence for sequence in input_sequences],

padding="longest",

max_length=MAX_INPUT,

truncation=True,

return_tensors="pt",

)

input_ids, attention_mask = encoding.input_ids, encoding.attention_mask

# Tokenize the target data

target_encoding = tokenizer(output_data, padding="longest", max_length=MAX_OUTPUT, truncation=True)

labels = target_encoding.input_ids

# replace padding token id's of the labels by -100

labels = torch.tensor(labels)

labels[labels == tokenizer.pad_token_id] = -100

# Convert our data to the dataset format
data = Dataset.from_pandas(pd.DataFrame({'input_ids': list(np.array(input_ids)), 'attention_mask': list(np.array(attention_mask)), 'labels': list(np.array(labels))}))

data = data.train_test_split(0.02)

# This yields the inputs for our trainer: train_dataset = data['train'], eval_dataset = data['test']

``` |

Rostlab/prot_bert_bfd | Rostlab | "2020-12-11T21:30:10Z" | 401,942 | 14 | transformers | [

"transformers",

"pytorch",

"tf",

"fill-mask",

"protein language model",

"dataset:BFD",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | "2022-03-02T23:29:04Z" | ---

language: protein

tags:

- protein language model

datasets:

- BFD

---

# ProtBert-BFD model

Pretrained model on protein sequences using a masked language modeling (MLM) objective. It was introduced in

[this paper](https://doi.org/10.1101/2020.07.12.199554) and first released in

[this repository](https://github.com/agemagician/ProtTrans). This model is trained on uppercase amino acids: it only works with capital letter amino acids.

## Model description

ProtBert-BFD is based on the BERT model and was pretrained on a large corpus of protein sequences in a self-supervised fashion.

This means it was pretrained on the raw protein sequences only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those protein sequences.

One important difference between our BERT model and the original BERT version is that sequences are treated as separate documents.

This means that next sentence prediction is not used, as each sequence is treated as a complete document.

The masking follows the original BERT training, which randomly masks 15% of the amino acids in the input.

The features extracted from this model revealed that the LM embeddings from unlabeled data (only protein sequences) captured important biophysical properties governing protein shape.

This implies that the model learned some of the grammar of the language of life realized in protein sequences.

## Intended uses & limitations

The model could be used for protein feature extraction or to be fine-tuned on downstream tasks.

We have noticed in some tasks you could gain more accuracy by fine-tuning the model rather than using it as a feature extractor.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python
>>> from transformers import BertForMaskedLM, BertTokenizer, pipeline
>>> tokenizer = BertTokenizer.from_pretrained('Rostlab/prot_bert_bfd', do_lower_case=False)
>>> model = BertForMaskedLM.from_pretrained("Rostlab/prot_bert_bfd")
>>> unmasker = pipeline('fill-mask', model=model, tokenizer=tokenizer)
>>> unmasker('D L I P T S S K L V V [MASK] D T S L Q V K K A F F A L V T')
[{'score': 0.1165614128112793,
  'sequence': '[CLS] D L I P T S S K L V V L D T S L Q V K K A F F A L V T [SEP]',
  'token': 5,
  'token_str': 'L'},
 {'score': 0.08976086974143982,
  'sequence': '[CLS] D L I P T S S K L V V V D T S L Q V K K A F F A L V T [SEP]',
  'token': 8,
  'token_str': 'V'},
 {'score': 0.08864385634660721,
  'sequence': '[CLS] D L I P T S S K L V V S D T S L Q V K K A F F A L V T [SEP]',
  'token': 10,
  'token_str': 'S'},
 {'score': 0.06227643042802811,
  'sequence': '[CLS] D L I P T S S K L V V A D T S L Q V K K A F F A L V T [SEP]',
  'token': 6,
  'token_str': 'A'},
 {'score': 0.06194969266653061,
  'sequence': '[CLS] D L I P T S S K L V V T D T S L Q V K K A F F A L V T [SEP]',
  'token': 15,
  'token_str': 'T'}]
```

Here is how to use this model to get the features of a given protein sequence in PyTorch:

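```python
import re

from transformers import BertModel, BertTokenizer

# The tokenizer must keep upper case: amino acids are single upper-case letters.
tokenizer = BertTokenizer.from_pretrained('Rostlab/prot_bert_bfd', do_lower_case=False)
model = BertModel.from_pretrained("Rostlab/prot_bert_bfd")

# Residues are separated by single spaces so that each one becomes its own token.
sequence_Example = "A E T C Z A O"
# Map the rare amino acids U, Z, O and B to X.
sequence_Example = re.sub(r"[UZOB]", "X", sequence_Example)

encoded_input = tokenizer(sequence_Example, return_tensors='pt')
# output.last_hidden_state holds one embedding per token, including [CLS] and [SEP].
output = model(**encoded_input)
```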
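Raw sequences usually arrive without spaces and sometimes in lower case, so they need to be brought into the space-separated form used above before tokenization. The following is a minimal sketch of such a step; `prepare_sequence` is a hypothetical helper name and is not part of `transformers` or of the released model code.

```python
import re


def prepare_sequence(raw: str) -> str:
    """Uppercase a raw protein string, map rare residues to X, and space-separate it."""
    cleaned = re.sub(r"\s+", "", raw).upper()   # drop whitespace and line breaks, then uppercase
    cleaned = re.sub(r"[UZOB]", "X", cleaned)   # same rare-residue mapping as in the example above
    return " ".join(cleaned)                    # one residue per whitespace-separated token


print(prepare_sequence("aetczao"))  # A E T C X A X
```

The returned string can be passed to the tokenizer in place of `sequence_Example`.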

## Training data

The ProtBert-BFD model was pretrained on [BFD](https://bfd.mmseqs.com/), a dataset consisting of 2.1 billion protein sequences.

## Training procedure

### Preprocessing

The protein sequences are uppercased and tokenized by splitting on single spaces, with a vocabulary size of 21.

The inputs of the model are then of the form:

```

[CLS] Protein Sequence A [SEP] Protein Sequence B [SEP]

```

Furthermore, each protein sequence was treated as a separate document.

The preprocessing step was performed twice, once for a combined length (2 sequences) of less than 512 amino acids, and another time using a combined length (2 sequences) of less than 2048 amino acids.

The details of the masking procedure for each sequence follow the original Bert model:

- 15% of the amino acids are masked.

- In 80% of the cases, the masked amino acids are replaced by `[MASK]`.

- In 10% of the cases, the masked amino acids are replaced by a random amino acid different from the one they replace.

- In the 10% remaining cases, the masked amino acids are left as is.
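The masking rules above can be sketched in a few lines of plain Python. This is an illustration only, not the authors' training code: the function name `mask_sequence`, the 20-letter alphabet, and the choice to select each position independently with probability 0.15 (rather than picking an exact 15% of positions) are all assumptions made for the example.

```python
import random

AMINO_ACIDS = list("ACDEFGHIKLMNPQRSTVWY")  # illustrative alphabet of 20 standard residues


def mask_sequence(residues, mask_prob=0.15, rng=None):
    """Return (corrupted, labels); labels[i] is the original residue at selected positions, else None."""
    rng = rng or random.Random()
    corrupted = list(residues)
    labels = [None] * len(residues)
    for i, aa in enumerate(residues):
        if rng.random() >= mask_prob:
            continue                      # position not selected for prediction
        labels[i] = aa                    # the model has to recover this residue
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = "[MASK]"       # 80%: replace with the mask token
        elif roll < 0.9:
            # 10%: replace with a random residue different from the original
            corrupted[i] = rng.choice([a for a in AMINO_ACIDS if a != aa])
        # remaining 10%: leave the residue unchanged
    return corrupted, labels


corrupted, labels = mask_sequence("D L I P T S S K L V V".split(), rng=random.Random(0))
print(" ".join(corrupted))
```

Positions where `labels` is `None` do not contribute to the loss; the others are the prediction targets.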

### Pretraining

The model was trained on a single TPU Pod V3-1024 for one million steps in total: 800k steps using sequence length 512 (batch size 32k), and 200k steps using sequence length 2048 (batch size 6k).

The optimizer used is Lamb with a learning rate of 0.002, a weight decay of 0.01, learning rate warmup for 140k steps and linear decay of the learning rate after.
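To make the schedule concrete, here is a small sketch of the learning rate as a function of the training step. The peak rate, warmup length and total step count come from the text; that the warmup is linear and starts at zero, and that the decay reaches zero exactly at the final step, are assumptions of this example.

```python
PEAK_LR = 0.002          # from the text
WARMUP_STEPS = 140_000   # from the text
TOTAL_STEPS = 1_000_000  # from the text


def learning_rate(step: int) -> float:
    """Linear warmup to PEAK_LR, then linear decay (assumed to reach 0 at TOTAL_STEPS)."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    remaining = max(TOTAL_STEPS - step, 0)
    return PEAK_LR * remaining / (TOTAL_STEPS - WARMUP_STEPS)


for step in (0, 70_000, 140_000, 570_000, 1_000_000):
    print(step, learning_rate(step))
```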

## Evaluation results

When fine-tuned on downstream tasks, this model achieves the following results:

Test results (accuracy, in %):

| Task/Dataset | secondary structure (3-states) | secondary structure (8-states) | Localization | Membrane |
|:-----:|:-----:|:-----:|:-----:|:-----:|
| CASP12 | 76 | 65 | | |
| TS115 | 84 | 73 | | |
| CB513 | 83 | 70 | | |
| DeepLoc | | | 78 | 91 |

### BibTeX entry and citation info

```bibtex
@article {Elnaggar2020.07.12.199554,
	author = {Elnaggar, Ahmed and Heinzinger, Michael and Dallago, Christian and Rehawi, Ghalia and Wang, Yu and Jones, Llion and Gibbs, Tom and Feher, Tamas and Angerer, Christoph and Steinegger, Martin and Bhowmik, Debsindhu and Rost, Burkhard},
	title = {ProtTrans: Towards Cracking the Language of Life{\textquoteright}s Code Through Self-Supervised Deep Learning and High Performance Computing},
	elocation-id = {2020.07.12.199554},
	year = {2020},
	doi = {10.1101/2020.07.12.199554},
	publisher = {Cold Spring Harbor Laboratory},
	abstract = {Computational biology and bioinformatics provide vast data gold-mines from protein sequences, ideal for Language Models (LMs) taken from Natural Language Processing (NLP). These LMs reach for new prediction frontiers at low inference costs. Here, we trained two auto-regressive language models (Transformer-XL, XLNet) and two auto-encoder models (Bert, Albert) on data from UniRef and BFD containing up to 393 billion amino acids (words) from 2.1 billion protein sequences (22- and 112 times the entire English Wikipedia). The LMs were trained on the Summit supercomputer at Oak Ridge National Laboratory (ORNL), using 936 nodes (total 5616 GPUs) and one TPU Pod (V3-512 or V3-1024). We validated the advantage of up-scaling LMs to larger models supported by bigger data by predicting secondary structure (3-states: Q3=76-84, 8 states: Q8=65-73), sub-cellular localization for 10 cellular compartments (Q10=74) and whether a protein is membrane-bound or water-soluble (Q2=89). Dimensionality reduction revealed that the LM-embeddings from unlabeled data (only protein sequences) captured important biophysical properties governing protein shape. This implied learning some of the grammar of the language of life realized in protein sequences. The successful up-scaling of protein LMs through HPC to larger data sets slightly reduced the gap between models trained on evolutionary information and LMs. Availability ProtTrans: https://github.com/agemagician/ProtTrans. Competing Interest Statement: The authors have declared no competing interest.},
	URL = {https://www.biorxiv.org/content/early/2020/07/21/2020.07.12.199554},
	eprint = {https://www.biorxiv.org/content/early/2020/07/21/2020.07.12.199554.full.pdf},
	journal = {bioRxiv}
}
```

> Created by [Ahmed Elnaggar/@Elnaggar_AI](https://twitter.com/Elnaggar_AI) | [LinkedIn](https://www.linkedin.com/in/prof-ahmed-elnaggar/)

|

MCG-NJU/videomae-base | MCG-NJU | "2024-03-29T08:02:16Z" | 400,195 | 32 | transformers | [

"transformers",

"pytorch",

"safetensors",

"videomae",

"pretraining",

"vision",

"video-classification",

"arxiv:2203.12602",

"arxiv:2111.06377",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us"

] | video-classification | "2022-08-03T09:27:59Z" | ---

license: "cc-by-nc-4.0"

tags:

- vision

- video-classification

---

# VideoMAE (base-sized model, pre-trained only)

VideoMAE model pre-trained on Kinetics-400 for 1600 epochs in a self-supervised way. It was introduced in the paper [VideoMAE: Masked Autoencoders are Data-Efficient Learners for Self-Supervised Video Pre-Training](https://arxiv.org/abs/2203.12602) by Tong et al. and first released in [this repository](https://github.com/MCG-NJU/VideoMAE).

Disclaimer: The team releasing VideoMAE did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

VideoMAE is an extension of [Masked Autoencoders (MAE)](https://arxiv.org/abs/2111.06377) to video. The architecture of the model is very similar to that of a standard Vision Transformer (ViT), with a decoder on top for predicting pixel values for masked patches.

Videos are presented to the model as a sequence of fixed-size patches (resolution 16x16), which are linearly embedded. A [CLS] token is added to the beginning of the sequence for use in classification tasks, and fixed sine/cosine position embeddings are added before the sequence is fed to the layers of the Transformer encoder.
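For readers unfamiliar with fixed sine/cosine position embeddings, the sketch below builds such a table in plain Python, following the formulation from the original Transformer paper. The function name `sinusoid_table`, the base of 10000 and the even/odd column layout are assumptions for illustration; this is not code from the VideoMAE repository.

```python
import math


def sinusoid_table(num_positions: int, dim: int):
    """Fixed position table: even columns use sine, odd columns use cosine."""
    table = []
    for pos in range(num_positions):
        row = []
        for j in range(dim):
            # Columns 2k and 2k+1 share the same frequency.
            angle = pos / (10000 ** (2 * (j // 2) / dim))
            row.append(math.sin(angle) if j % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


table = sinusoid_table(num_positions=4, dim=8)
print(table[0][:4])  # [0.0, 1.0, 0.0, 1.0]
```

Because the table depends only on the position and the embedding size, it is computed once and not updated during training.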

By pre-training the model, it learns an inner representation of videos that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled videos for instance, you can train a standard classifier by placing a linear layer on top of the pre-trained encoder. One typically places a linear layer on top of the [CLS] token, as the last hidden state of this token can be seen as a representation of an entire video.

## Intended uses & limitations

You can use the raw model for predicting pixel values for masked patches of a video, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=videomae) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to predict pixel values for randomly masked patches:

```python
from transformers import VideoMAEImageProcessor, VideoMAEForPreTraining
import numpy as np
import torch

# Dummy clip: a list of 16 random frames, each of shape (channels, height, width).
num_frames = 16
video = list(np.random.randn(num_frames, 3, 224, 224))

processor = VideoMAEImageProcessor.from_pretrained("MCG-NJU/videomae-base")
model = VideoMAEForPreTraining.from_pretrained("MCG-NJU/videomae-base")

pixel_values = processor(video, return_tensors="pt").pixel_values

# Number of tokens: spatial patches per frame times the number of tubelets along time.
num_patches_per_frame = (model.config.image_size // model.config.patch_size) ** 2
seq_length = (num_frames // model.config.tubelet_size) * num_patches_per_frame
# Random boolean mask over the token sequence; True marks a masked position.
bool_masked_pos = torch.randint(0, 2, (1, seq_length)).bool()

outputs = model(pixel_values, bool_masked_pos=bool_masked_pos)
loss = outputs.loss
```
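As a quick check of the sequence-length arithmetic in the example above, the calculation can be worked through with concrete numbers. The values below (image size 224, patch size 16, tubelet size 2) are assumed to match this checkpoint's configuration; read them from `model.config` to confirm.

```python
# Assumed configuration values for the base checkpoint (check model.config to confirm).
image_size, patch_size = 224, 16
num_frames, tubelet_size = 16, 2

num_patches_per_frame = (image_size // patch_size) ** 2            # 14 * 14 = 196
seq_length = (num_frames // tubelet_size) * num_patches_per_frame  # 8 * 196 = 1568
print(num_patches_per_frame, seq_length)  # 196 1568
```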

For more code examples, we refer to the [documentation](https://huggingface.co/transformers/main/model_doc/videomae.html#).

## Training data

(to do, feel free to open a PR)

## Training procedure

### Preprocessing