ENKO-T5-SMALL-V0

This is an English-to-Korean machine translation model. It is based on the T5-small architecture but was trained from scratch.

Code

The training code comes from my lecture, LLM을 위한 김기현의 NLP EXPRESS (Kim Ki Hyun's NLP EXPRESS for LLMs), published on FastCampus. You can find the training code in this GitHub repo.

Dataset

The training data for this model comes mainly from AI-Hub and consists of 11M parallel samples.

Tokenizer

I use a byte-level BPE tokenizer for both the source and target languages. Because it covers both languages, the tokenizer's vocabulary size is 60k.
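To illustrate the idea behind byte-level BPE, here is a minimal sketch in plain Python. It is not the tokenizer used for this model: the function names (`train_byte_bpe`, `merge_pair`, `encode`) and the toy corpus are hypothetical, and it splits on whitespace only, whereas production tokenizers usually add a regex pre-tokenizer and special tokens. The point it shows is that English and Korean text both start out as UTF-8 bytes, so one set of merges can serve both languages.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_byte_bpe(corpus, num_merges):
    """Learn up to `num_merges` BPE merges over UTF-8 bytes (toy implementation)."""
    # Every whitespace-separated word starts as a sequence of single bytes.
    words = Counter()
    for line in corpus:
        for word in line.split():
            words[tuple(bytes([b]) for b in word.encode("utf-8"))] += 1

    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break  # every word is already a single symbol
        # Pick the most frequent pair; ties are broken deterministically.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        merged_words = Counter()
        for symbols, freq in words.items():
            merged_words[merge_pair(symbols, best)] += freq
        words = merged_words
    return merges


def encode(word, merges):
    """Tokenize one word by replaying the learned merges in order."""
    symbols = tuple(bytes([b]) for b in word.encode("utf-8"))
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


# Hypothetical toy corpus mixing both languages.
corpus = ["hello world", "안녕 세계", "hello 안녕"]
merges = train_byte_bpe(corpus, num_merges=50)
tokens = encode("안녕", merges)
```

Each Hangul syllable occupies three bytes in UTF-8, so "안녕" begins as six byte symbols before any merges are applied. Because the base alphabet is the 256 possible byte values, no input is ever out of vocabulary.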

Architecture

The model architecture is based on T5-small, a popular encoder-decoder architecture. Note that this model was trained from scratch, not fine-tuned.
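As a rough guide to model size, the sketch below counts parameters for a T5-small-shaped model with a larger vocabulary. It assumes the standard T5-small hyperparameters (6 encoder and 6 decoder layers, `d_model=512`, 8 heads with `d_kv=64`, `d_ff=2048`, no bias terms, input and output embeddings tied) and a vocabulary of exactly 60,000 tokens. None of these details are confirmed for this model, so treat the result as an estimate. The function name `t5_param_count` is hypothetical.

```python
def t5_param_count(vocab_size, d_model=512, d_kv=64, num_heads=8,
                   d_ff=2048, num_layers=6, rel_buckets=32):
    """Estimate the parameter count of an original-style T5 model (assumed layout)."""
    inner = num_heads * d_kv
    attention = 4 * d_model * inner      # q, k, v, o projections, no biases
    feed_forward = 2 * d_model * d_ff    # two linear maps, no biases
    layer_norm = d_model                 # scale only, no bias

    encoder_layer = attention + feed_forward + 2 * layer_norm
    # A decoder layer adds cross-attention and a third layer norm.
    decoder_layer = 2 * attention + feed_forward + 3 * layer_norm

    # Each stack has one relative position bias table and a final layer norm.
    rel_bias = rel_buckets * num_heads
    encoder = num_layers * encoder_layer + rel_bias + layer_norm
    decoder = num_layers * decoder_layer + rel_bias + layer_norm

    # Assumption: one shared embedding matrix, tied with the output layer.
    embedding = vocab_size * d_model
    return embedding + encoder + decoder


original = t5_param_count(32_128)   # 60,506,624 with the original T5 vocabulary
estimate = t5_param_count(60_000)   # 74,777,088 under the assumptions above
```

Under these assumptions, the embedding matrix accounts for about 30.7M of the roughly 74.8M parameters, so the larger vocabulary is the main reason such a model would exceed the original T5-small's 60.5M.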

Evaluation

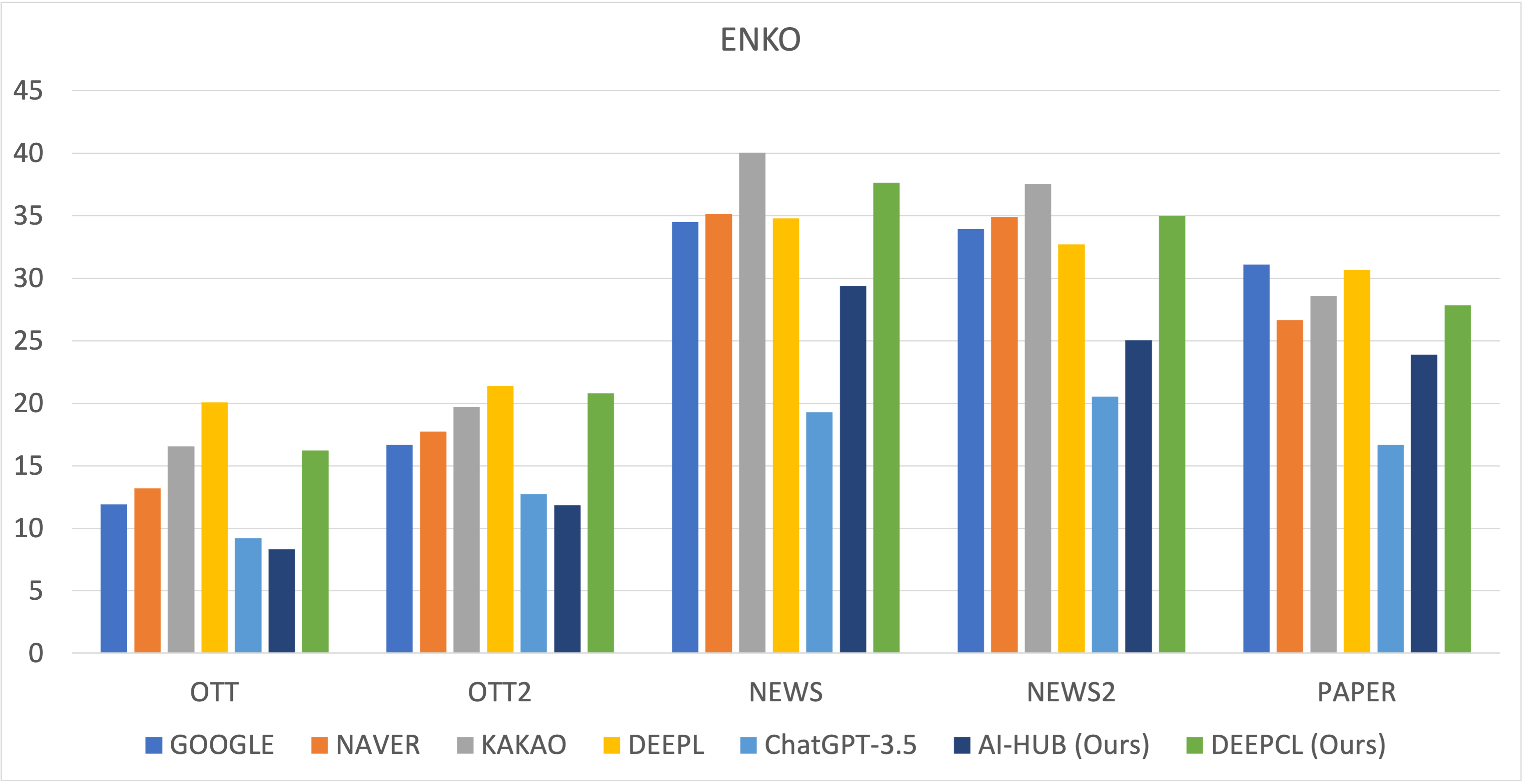

I evaluated the model on 5 different test sets. The figure above shows the BLEU score on each test set.

The DEEPCL model is a private version of this model, trained on much more data.
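For readers unfamiliar with BLEU, the sketch below computes a simplified corpus-level score in plain Python. It is not the evaluation script behind the figure: it assumes one reference per hypothesis, whitespace tokenization, and no smoothing, and the names `ngrams` and `corpus_bleu` are hypothetical. Published scores are usually computed with a standard tool such as sacreBLEU, and Korean scores in particular depend heavily on the tokenization used.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses, references, max_n=4):
    """Simplified corpus BLEU on a 0-100 scale (single reference, no smoothing)."""
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0

    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngrams(h, n), ngrams(r, n)
            # Clipped matches: each n-gram counts at most as often as in the reference.
            matches[n - 1] += sum((h_counts & r_counts).values())
            totals[n - 1] += sum(h_counts.values())

    if min(matches) == 0:
        return 0.0  # without smoothing, any zero precision gives a zero score

    # Geometric mean of the n-gram precisions.
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # The brevity penalty punishes output that is shorter than the reference.
    brevity = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * brevity * math.exp(log_precision)


# Hypothetical example sentences, not taken from the test sets.
refs = ["나는 오늘 학교에 갔다 그리고 친구를 만났다"]
perfect = corpus_bleu(refs, refs)
partial = corpus_bleu(["나는 오늘 학교에 갔다 그리고 선생님을 만났다"], refs)
```

Because n-gram precisions are pooled over the whole test set before averaging, corpus BLEU is not the mean of per-sentence scores, which is why it is reported once per test set.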

Contact

Kim Ki Hyun (nlp.with.deep.learning@gmail.com)
